Multi-SimLex: A Large-Scale Evaluation of

Multilingual and Crosslingual Lexical

Semantic Similarity

Ivan Vuli´c♠

Language Technology Lab

University of Cambridge

iv250@cam.ac.uk

Simon Baker♠

Language Technology Lab

University of Cambridge

sb895@cam.ac.uk

Edoardo Maria Ponti♠

Language Technology Lab

University of Cambridge

ep490@cam.ac.uk

Ulla Petti

Language Technology Lab

University of Cambridge

ump20@cam.ac.uk

Ira Leviant

Faculty of Industrial Engineering and

Management, Technion, IIT

ira.leviant@campus.technion.ac.il

Kelly Wing

Language Technology Lab

University of Cambridge

lkw33cam@gmail.com

Olga Majewska

Language Technology Lab

University of Cambridge

om304@cam.ac.uk

All data are available at https://multisimlex.com/

♠ Equal contribution.

Submission received: 11 March 2020; revised version received: 17 July 2020; accepted for publication:

3 October 2020.

https://doi.org/10.1162/COLI a 00391

© 2020 Association for Computational Linguistics

Published under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International

(CC BY-NC-ND 4.0) license

l

D

o

w

n

o

a

d

e

d

f

r

o

m

h

t

t

p

:

/

/

d

i

r

e

c

t

.

m

i

t

.

e

d

u

/

c

o

l

i

/

l

a

r

t

i

c

e

–

p

d

f

/

/

/

/

4

6

4

8

4

7

1

8

8

8

2

8

7

/

c

o

l

i

_

a

_

0

0

3

9

1

p

d

.

f

b

y

g

u

e

s

t

t

o

n

0

7

S

e

p

e

m

b

e

r

2

0

2

3

Computational Linguistics

Volume 46, Number 4

Eden Bar

Faculty of Industrial Engineering and

Management, Technion, IIT

edenb@campus.technion.ac.il

Matt Malone

Language Technology Lab

University of Cambridge

mm2289@cam.ac.uk

Thierry Poibeau

LATTICE Lab, CNRS and ENS/PSL and

Univ. Sorbonne Nouvelle

thierry.poibeau@ens.fr

Roi Reichart

Faculty of Industrial Engineering and

Management, Technion, IIT

roiri@ie.technion.ac.il

Anna Korhonen

Language Technology Lab

University of Cambridge

alk23@cam.ac.uk

We introduce Multi-SimLex, a large-scale lexical resource and evaluation benchmark covering

data sets for 12 typologically diverse languages, including major languages (e.g., Mandarin

Chinese, Spanish, Russian) as well as less-resourced ones (e.g., Welsh, Kiswahili). Each lan-

guage data set is annotated for the lexical relation of semantic similarity and contains 1,888

semantically aligned concept pairs, providing a representative coverage of word classes (nouns,

verbs, adjectives, adverbs), frequency ranks, similarity intervals, lexical fields, and concreteness

levels. Additionally, owing to the alignment of concepts across languages, we provide a suite

of 66 crosslingual semantic similarity data sets. Because of its extensive size and language

coverage, Multi-SimLex provides entirely novel opportunities for experimental evaluation and

analysis. On its monolingual and crosslingual benchmarks, we evaluate and analyze a wide array

of recent state-of-the-art monolingual and crosslingual representation models, including static

and contextualized word embeddings (such as fastText, monolingual and multilingual BERT,

XLM), externally informed lexical representations, as well as fully unsupervised and (weakly)

supervised crosslingual word embeddings. We also present a step-by-step data set creation

protocol for creating consistent, Multi-Simlex–style resources for additional languages. We make

these contributions—the public release of Multi-SimLex data sets, their creation protocol, strong

baseline results, and in-depth analyses which can be helpful in guiding future developments in

multilingual lexical semantics and representation learning—available via a Web site that will

encourage community effort in further expansion of Multi-Simlex to many more languages.

Such a large-scale semantic resource could inspire significant further advances in NLP across

languages.

848

l

D

o

w

n

o

a

d

e

d

f

r

o

m

h

t

t

p

:

/

/

d

i

r

e

c

t

.

m

i

t

.

e

d

u

/

c

o

l

i

/

l

a

r

t

i

c

e

–

p

d

f

/

/

/

/

4

6

4

8

4

7

1

8

8

8

2

8

7

/

c

o

l

i

_

a

_

0

0

3

9

1

p

d

.

f

b

y

g

u

e

s

t

t

o

n

0

7

S

e

p

e

m

b

e

r

2

0

2

3

Vuli´c et al.

1. Introduction

Multi-SimLex

The lack of annotated training and evaluation data for many tasks and domains hinders

the development of computational models for the majority of the world’s languages

(Snyder and Barzilay 2010; Adams et al. 2017; Ponti et al. 2019a; Joshi et al. 2020). The

necessity to guide and advance multilingual and crosslingual NLP through annotation

efforts that follow crosslingually consistent guidelines has been recently recognized

by collaborative initiatives such as the Universal Dependency (UD) project (Nivre

et al. 2019). The latest version of UD (as of July 2020) covers about 90 languages.

Crucially, this resource continues to steadily grow and evolve through the contribu-

tions of annotators from across the world, extending the UD’s reach to a wide array

of typologically diverse languages. Besides steering research in multilingual parsing

(Zeman et al. 2018; Kondratyuk and Straka 2019; Doitch et al. 2019) and crosslingual

parser transfer (Rasooli and Collins 2017; Lin et al. 2019; Rotman and Reichart 2019),

the consistent annotations and guidelines have also enabled a range of insightful com-

parative studies focused on the languages’ syntactic (dis)similarities (Chen and Gerdes

2017; Bjerva and Augenstein 2018; Bjerva et al. 2019; Ponti et al. 2018a; Pires, Schlinger,

and Garrette 2019).

Inspired by the UD work and its substantial impact on research in (multilingual)

syntax, in this article we introduce Multi-SimLex, a suite of manually and consistently

annotated semantic data sets for 12 different languages, focused on the fundamental

lexical relation of semantic similarity on a continuous scale (i.e., gradience/strength of

semantic similarity) (Budanitsky and Hirst 2006; Hill, Reichart, and Korhonen 2015). For

any pair of words, this relation measures whether (and to what extent) their referents

share the same (functional) features (e.g., lion – cat), as opposed to general cognitive

association (e.g., lion – zoo) captured by co-occurrence patterns in texts (i.e., the dis-

tributional information).1 Data sets that quantify the strength of semantic similarity

between concept pairs such as SimLex-999 (Hill, Reichart, and Korhonen 2015) or

SimVerb-3500 (Gerz et al. 2016) have been instrumental in improving models for distri-

butional semantics and representation learning. Discerning between semantic similarity

and relatedness/association is not only crucial for theoretical studies on lexical seman-

tics (see §2), but has also been shown to benefit a range of language understanding

tasks in NLP. Examples include dialog state tracking (Mrkˇsi´c et al. 2017; Ren et al. 2018),

spoken language understanding (Kim et al. 2016; Kim, de Marneffe, and Fosler-Lussier

2016), text simplification (Glavaˇs and Vuli´c 2018; Ponti et al. 2018b; Lauscher et al. 2019),

and dictionary and thesaurus construction (Cimiano, Hotho, and Staab 2005; Hill et al.

2016).

Despite the proven usefulness of semantic similarity data sets, they are available

only for a small and typologically narrow sample of resource-rich languages such as

German, Italian, and Russian (Leviant and Reichart 2015), whereas some language

types and low-resource languages typically lack similar evaluation data. Even if some

resources do exist, they are limited in their size (e.g., 500 pairs in Turkish [Ercan and

Yıldız 2018], 500 in Farsi [Camacho-Collados et al. 2017], or 300 in Finnish [Venekoski

and Vankka 2017]) and coverage (e.g., all data sets that originated from the original

English SimLex-999 contain only high-frequent concepts, and are dominated by nouns).

This is why, as our departure point, we introduce a larger and more comprehensive

English word similarity data set spanning 1,888 concept pairs (see §4).

1 This lexical relation is, somewhat imprecisely, also termed true or pure semantic similarity (Hill, Reichart,

and Korhonen 2015; Kiela, Hill, and Clark 2015); see the ensuing discussion in §2.1.

849

l

D

o

w

n

o

a

d

e

d

f

r

o

m

h

t

t

p

:

/

/

d

i

r

e

c

t

.

m

i

t

.

e

d

u

/

c

o

l

i

/

l

a

r

t

i

c

e

–

p

d

f

/

/

/

/

4

6

4

8

4

7

1

8

8

8

2

8

7

/

c

o

l

i

_

a

_

0

0

3

9

1

p

d

.

f

b

y

g

u

e

s

t

t

o

n

0

7

S

e

p

e

m

b

e

r

2

0

2

3

Computational Linguistics

Volume 46, Number 4

Most importantly, semantic similarity data sets in different languages have been

created using heterogeneous construction procedures with different guidelines for

translation and annotation, as well as different rating scales. For instance, some data

sets were obtained by directly translating the English SimLex-999 in its entirety (Leviant

and Reichart 2015; Mrkˇsi´c et al. 2017) or in part (Venekoski and Vankka 2017). Other data

sets were created from scratch (Ercan and Yıldız 2018) and yet others sampled English

concept pairs differently from SimLex-999 and then translated and reannotated them

in target languages (Camacho-Collados et al. 2017). This heterogeneity makes these

data sets incomparable and precludes systematic crosslinguistic analyses. In this arti-

cle, consolidating the lessons learned from previous data set construction paradigms,

we propose a carefully designed translation and annotation protocol for developing

monolingual Multi-SimLex data sets with aligned concept pairs for typologically di-

verse languages. We apply this protocol to a set of 12 languages, including a mixture of

major languages (e.g., Mandarin, Russian, and French) as well as several low-resource

ones (e.g., Kiswahili, Welsh, and Yue Chinese). We demonstrate that our proposed data

set creation procedure yields data with high inter-annotator agreement rates (e.g., the

average mean inter-annotator agreement over all 12 languages is Spearman’s ρ = 0.740,

ranging from ρ = 0.667 for Russian to ρ = 0.812 for French).

The unified construction protocol and alignment between concept pairs enables a

series of quantitative analyses. Preliminary studies on the influence that polysemy and

crosslingual variation in lexical categories (see §2.3) have on similarity judgments are

provided in §5. Data created according to Multi-SimLex protocol also allow for probing

into whether similarity judgments are universal across languages, or rather depend on

linguistic affinity (in terms of linguistic features, phylogeny, and geographical location).

We investigate this question in §5.4. Naturally, Multi-SimLex data sets can be used as

an intrinsic evaluation benchmark to assess the quality of lexical representations based

on monolingual, joint multilingual, and transfer learning paradigms. We conduct a

systematic evaluation of several state-of-the-art representation models in §7, showing

that there are large gaps between human and system performance in all languages. The

proposed construction paradigm also supports the automatic creation of 66 crosslingual

Multi-SimLex data sets by interleaving the monolingual ones. We outline the construc-

tion of the crosslingual data sets in §6, and then present a quantitative evaluation of a

series of cutting-edge crosslingual representation models on this benchmark in §8.

Contributions. We now summarize the main contributions of this work:

1)

Building on lessons learned from prior work, we create a more

comprehensive lexical semantic similarity data set for the English

language spanning a total of 1,888 concept pairs balanced with respect to

similarity, frequency, and concreteness, and covering four word classes:

nouns, verbs, adjectives and, for the first time, adverbs. This data set

serves as the main source for the creation of equivalent data sets in several

other languages.

2) We present a carefully designed and rigorous language-agnostic

translation and annotation protocol. These well-defined guidelines will

facilitate the development of future Multi-SimLex data sets for other

languages. The proposed protocol eliminates some crucial issues with

prior efforts focused on the creation of multilingual semantic resources,

850

l

D

o

w

n

o

a

d

e

d

f

r

o

m

h

t

t

p

:

/

/

d

i

r

e

c

t

.

m

i

t

.

e

d

u

/

c

o

l

i

/

l

a

r

t

i

c

e

–

p

d

f

/

/

/

/

4

6

4

8

4

7

1

8

8

8

2

8

7

/

c

o

l

i

_

a

_

0

0

3

9

1

p

d

.

f

b

y

g

u

e

s

t

t

o

n

0

7

S

e

p

e

m

b

e

r

2

0

2

3

Vuli´c et al.

Multi-SimLex

namely: i) limited coverage; ii) heterogeneous annotation guidelines; and

iii) concept pairs that are semantically incomparable across different

languages.

3) We offer to the community manually annotated evaluation sets of 1,888

concept pairs across 12 typologically diverse languages, and 66 large

crosslingual evaluation sets. To the best of our knowledge, Multi-SimLex is

the most comprehensive evaluation resource to date focused on the

relation of semantic similarity.

4) We benchmark a wide array of recent state-of-the-art monolingual and

crosslingual word representation models across our sample of languages.

The results can serve as strong baselines that lay the foundation for future

improvements.

5) We present a first large-scale evaluation study on the ability of encoders

pretrained on language modeling (such as BERT [Devlin et al. 2019] and

XLM [Conneau and Lample 2019]) to reason over word-level semantic

similarity in different languages. To our surprise, the results show that

monolingual pretrained encoders, even when presented with word types

out of context, are sometimes competitive with static word embedding

models such as fastText (Bojanowski et al. 2017) or word2vec (Mikolov

et al. 2013). The results also reveal a huge gap in performance between

massively multilingual pretrained encoders and language-specific

encoders in favor of the latter: Our findings support other recent empirical

evidence related to the “curse of multilinguality” (Conneau et al. 2019;

Bapna and Firat 2019) in representation learning.

6) We make all of these resources available on a Web site that facilitates easy

creation, submission, and sharing of Multi-Simlex–style data sets for a

larger number of languages. We hope that this will yield an even larger

repository of semantic resources that inspire future advances in NLP

within and across languages.

In light of the success of UD (Nivre et al. 2019), we hope that our initiative will

instigate a collaborative public effort with established and clear-cut guidelines that will

result in additional Multi-SimLex data sets in a large number of languages in the near

future. Moreover, we hope that it will provide means to advance our understanding

of distributional and lexical semantics across a large number of languages. All mono-

lingual and crosslingual Multi-SimLex data sets—along with detailed translation and

annotation guidelines—are available online at: https://multisimlex.com/.

2. Lexical Semantic Similarity

2.1 Similarity and Association

The focus of the Multi-SimLex initiative is on the lexical relation of “true/pure” semantic

similarity, as opposed to the broader conceptual association. For any pair of words, this

relation measures whether their referents share the same features. For instance, graffiti

851

l

D

o

w

n

o

a

d

e

d

f

r

o

m

h

t

t

p

:

/

/

d

i

r

e

c

t

.

m

i

t

.

e

d

u

/

c

o

l

i

/

l

a

r

t

i

c

e

–

p

d

f

/

/

/

/

4

6

4

8

4

7

1

8

8

8

2

8

7

/

c

o

l

i

_

a

_

0

0

3

9

1

p

d

.

f

b

y

g

u

e

s

t

t

o

n

0

7

S

e

p

e

m

b

e

r

2

0

2

3

Computational Linguistics

Volume 46, Number 4

and frescos are similar to the extent that they are both forms of painting and appear

on walls. This relation can be contrasted with the cognitive association between two

words, which often depends on how much their referents interact in the real world,

or are found in the same situations. For instance, a painter is easily associated with

frescos, although they lack any physical commonalities. Association is also known in the

literature under other names: relatedness (Budanitsky and Hirst 2006), topical similarity

(McKeown et al. 2002), and domain similarity (Turney 2012).

Semantic similarity and association overlap to some degree, but do not coincide

(Kiela, Hill, and Clark 2015; Vuli´c, Kiela, and Korhonen 2017). In fact, there exist plenty

of pairs that are intuitively associated but not similar. Pairs where the converse is true

can also be encountered, although more rarely. An example are synonyms where a word

is common and the other infrequent, such as to seize and to commandeer. Hill, Reichart,

and Korhonen (2015) revealed that although similarity measures based on the WordNet

graph (Wu and Palmer 1994) and human judgments of association in the University

of South Florida Free Association Database (Nelson, McEvoy, and Schreiber 2004) do

correlate, a number of pairs follow opposite trends. Several studies on human cognition

also point in the same direction. For instance, semantic priming can be triggered by

similar words without association (Lucas 2000). On the other hand, a connection with

cue words is established more quickly for topically related words than for similar words

in free association tasks (De Deyne and Storms 2008).

A key property of semantic similarity is its gradience: Pairs of words can be similar

to a different degree. On the other hand, the relation of synonymy is binary: Pairs of

words are synonyms if they can be substituted in all contexts (or most contexts, in a

looser sense); otherwise they are not. Although synonyms can be conceived as lying

on one extreme of the semantic similarity continuum, it is crucial to note that their

definition is stated in purely relational terms, rather than invoking their referential

properties (Lyons 1977; Cruse 1986; Coseriu 1967). This makes behavioral studies on

semantic similarity fundamentally different from lexical resources like WordNet (Miller

1995), which include paradigmatic relations (such as synonymy).

2.2 Similarity for NLP: Intrinsic Evaluation and Semantic Specialization

The ramifications of the distinction between similarity and association are profound

for distributional semantics. This paradigm of lexical semantics is grounded in the

distributional hypothesis, formulated by Firth (1957) and Harris (1951). According to

this hypothesis, the meaning of a word can be recovered empirically from the contexts

in which it occurs within a collection of texts. Because both pairs of topically related

words and pairs of purely similar words tend to appear in the same contexts, their asso-

ciated meaning confounds the two distinct relations (Hill, Reichart, and Korhonen 2015;

Schwartz, Reichart, and Rappoport 2015; Vuli´c et al. 2017b). As a result, distributional

methods obscure a crucial facet of lexical meaning.

This limitation also reflects onto word embeddings (WEs), representations of words

as low-dimensional vectors that have become indispensable for a wide range of NLP

applications (Collobert et al. 2011; Chen and Manning 2014; Melamud et al. 2016, inter

alia). In particular, it involves both static WEs learned from co-occurrence patterns

(Mikolov et al. 2013; Levy and Goldberg 2014; Bojanowski et al. 2017) and contextualized

WEs learned from modeling word sequences (Peters et al. 2018; Devlin et al. 2019,

inter alia). As a result, in the induced representations, geometrical closeness (measured,

e.g., through cosine distance) conflates genuine similarity with broad relatedness. For

852

l

D

o

w

n

o

a

d

e

d

f

r

o

m

h

t

t

p

:

/

/

d

i

r

e

c

t

.

m

i

t

.

e

d

u

/

c

o

l

i

/

l

a

r

t

i

c

e

–

p

d

f

/

/

/

/

4

6

4

8

4

7

1

8

8

8

2

8

7

/

c

o

l

i

_

a

_

0

0

3

9

1

p

d

.

f

b

y

g

u

e

s

t

t

o

n

0

7

S

e

p

e

m

b

e

r

2

0

2

3

Vuli´c et al.

Multi-SimLex

instance, the vectors for antonyms such as sober and drunk, by definition dissimilar,

might be neighbors in the semantic space under the distributional hypothesis. Similar

to work on distributional representations that predated the WE era (Sahlgren 2006),

Turney (2012), Kiela and Clark (2014), and Melamud et al. (2016) demonstrated that

different choices of hyperparameters in WE algorithms (such as context window) em-

phasize different relations in the resulting representations. Likewise, Agirre et al. (2009)

and Levy and Goldberg (2014) discovered that WEs learned from texts annotated with

syntactic information mirror similarity better than simple local bag-of-words neighbor-

hoods.

The failure of WEs to capture semantic similarity, in turn, affects model performance

in several NLP applications where such knowledge is crucial. In particular, Natural

Language Understanding tasks such as statistical dialog modeling, text simplification,

or semantic text similarity (Mrkˇsi´c et al. 2016; Kim et al. 2016; Ponti et al. 2019c), among

others, suffer the most. As a consequence, resources providing clean information on

semantic similarity are key in mitigating the side effects of the distributional signal. In

particular, such databases can be used for the intrinsic evaluations of specific WE models

as a proxy for their reliability for downstream applications (Collobert and Weston 2008;

Baroni and Lenci 2010; Hill, Reichart, and Korhonen 2015); intuitively, the more WEs are

misaligned with human judgments of similarity, the more their performance on actual

tasks is expected to be degraded. Moreover, word representations can be specialized

(a.k.a. retrofitted) by disentangling word relations of similarity and association. In

particular, linguistic constraints sourced from external databases (such as synonyms

from WordNet) can be injected into WEs (Faruqui et al. 2015; Wieting et al. 2015; Mrkˇsi´c

et al. 2017; Lauscher et al. 2019; Kamath et al. 2019, inter alia) in order to enforce

a particular relation in a distributional semantic space while preserving the original

adjacency properties.

2.3 Similarity and Language Variation: Semantic Typology

In this work, we tackle the concept of (true and gradient) semantic similarity from a

multilingual perspective. Although the same meaning representations may be shared

by all human speakers at a deep cognitive level, there is no one-to-one mapping between

the words in the lexicons of different languages. This makes the comparison of similarity

judgments across languages difficult, because the meaning overlap of translationally

equivalent words is sometimes far less than exact. This results from the fact that the

way languages “partition” semantic fields is partially arbitrary (Trier 1931), although

constrained crosslingually by common cognitive biases (Majid et al. 2007). For instance,

consider the field of colors: English distinguishes between green and blue, whereas Murle

(South Sudan) has a single word for both (Kay and Maffi 2013).2

In general, semantic typology studies the variation in lexical semantics across the

world’s languages. According to Evans (2011), the ways languages categorize concepts

into the lexicon follow three main axes: 1) granularity: what is the number of categories

in a specific domain?; 2) boundary location: where do the lines marking different cate-

gories lie?; 3) grouping and dissection: what are the membership criteria of a category;

which instances are considered to be more prototypical? Different choices with respect

2 Note that there is also inherent intra-language variability that can affect concept categorization, e.g.,

Vejdemo (2018) studies the monolingual variability in the domain of colors. Our annotation protocols and

models do not specifically cater to nor measure the fine-grained intra-language phenomenon.

853

l

D

o

w

n

o

a

d

e

d

f

r

o

m

h

t

t

p

:

/

/

d

i

r

e

c

t

.

m

i

t

.

e

d

u

/

c

o

l

i

/

l

a

r

t

i

c

e

–

p

d

f

/

/

/

/

4

6

4

8

4

7

1

8

8

8

2

8

7

/

c

o

l

i

_

a

_

0

0

3

9

1

p

d

.

f

b

y

g

u

e

s

t

t

o

n

0

7

S

e

p

e

m

b

e

r

2

0

2

3

Computational Linguistics

Volume 46, Number 4

to these axes lead to different lexicalization patterns.3 For instance, distinct senses in a

polysemous word in English, such as skin (referring to both the body and fruit), may be

assigned separate words in other languages such as Italian pelle and buccia, respectively

(Rzymski et al. 2020). We later analyze whether similarity scores obtained from native

speakers also loosely follow the patterns described by semantic typology.

3. Previous Work and Evaluation Data

Word Pair Data Sets. Rich expert-created resources such as WordNet (Miller 1995;

Fellbaum 1998), VerbNet (Kipper Schuler 2005; Kipper et al. 2008), or FrameNet (Baker,

Fillmore, and Lowe 1998) encode a wealth of semantic and syntactic information, but are

expensive and time-consuming to create. The scale of this problem becomes multiplied

by the number of languages in consideration. Therefore, crowd-sourcing with non-

expert annotators has been adopted as a quicker alternative to produce smaller and

more focused semantic resources and evaluation benchmarks. This alternative practice

has had a profound impact on distributional semantics and representation learning

(Hill, Reichart, and Korhonen 2015). Whereas some prominent English word pair data

sets such as WordSim-353 (Finkelstein et al. 2002), MEN (Bruni, Tran, and Baroni 2014),

or Stanford Rare Words (Luong, Socher, and Manning 2013) did not discriminate be-

tween similarity and relatedness, the importance of this distinction was established

by Hill, Reichart, and Korhonen (2015) (see again the discussion in §2.1) through the

creation of SimLex-999. This inspired other similar data sets that focused on different

lexical properties. For instance, SimVerb-3500 (Gerz et al. 2016) provided similarity

ratings for 3,500 English verbs, whereas CARD-660 (Pilehvar et al. 2018) aimed at

measuring the semantic similarity of infrequent concepts.

Semantic Similarity Data Sets in Other Languages. Motivated by the impact of data sets

such as SimLex-999 and SimVerb-3500 on representation learning in English, a line of

related work put focus on creating similar resources in other languages. The dominant

approach is translating and reannotating the entire original English SimLex-999 data

set, as done previously for German, Italian, and Russian (Leviant and Reichart 2015),

Hebrew and Croatian (Mrkˇsi´c et al. 2017), and Polish (Mykowiecka, Marciniak, and

Rychlik 2018). Venekoski and Vankka (2017) applied this process only to a subset of

300 concept pairs from the English SimLex-999. On the other hand, Camacho-Collados

et al. (2017) sampled a new set of 500 English concept pairs to ensure wider topical

coverage and balance across similarity spectra, and then translated those pairs to Ger-

man, Italian, Spanish, and Farsi (SEMEVAL-500). A similar approach was followed

by Ercan and Yıldız (2018) for Turkish, by Huang et al. (2019) for Mandarin Chinese,

and by Sakaizawa and Komachi (2018) for Japanese. Netisopakul, Wohlgenannt, and

Pulich (2019) translated the concatenation of SimLex-999, WordSim-353, and the En-

glish SEMEVAL-500 into Thai and then reannotated it. Finally, Barzegar et al. (2018)

translated English SimLex-999 and WordSim-353 to 11 resource-rich target languages

(German, French, Russian, Italian, Dutch, Chinese, Portuguese, Swedish, Spanish, Ara-

bic, Farsi), but they did not provide details concerning the translation process and the

3 More formally, colexification is a phenomenon when different meanings can be expressed by the same

word in a language (Franc¸ois 2008). For instance, the two senses that are distinguished in English as time

and weather are co-lexified in Croatian: the word vrijeme is used in both cases.

854

l

D

o

w

n

o

a

d

e

d

f

r

o

m

h

t

t

p

:

/

/

d

i

r

e

c

t

.

m

i

t

.

e

d

u

/

c

o

l

i

/

l

a

r

t

i

c

e

–

p

d

f

/

/

/

/

4

6

4

8

4

7

1

8

8

8

2

8

7

/

c

o

l

i

_

a

_

0

0

3

9

1

p

d

.

f

b

y

g

u

e

s

t

t

o

n

0

7

S

e

p

e

m

b

e

r

2

0

2

3

Vuli´c et al.

Multi-SimLex

resolution of translation disagreements. More importantly, they also did not reannotate

the translated pairs in the target languages. As we discussed in §2.3 and reiterate

later in §5, semantic differences among languages can have a profound impact on the

annotation scores; particularly, we show in §5.4 that these differences even roughly

define language clusters based on language affinity.

A core issue with the current data sets concerns a lack of one unified procedure

that ensures the comparability of resources in different languages. Further, concept

pairs for different languages are sourced from different corpora (e.g., direct translation

of the English data versus sampling from scratch in the target language). Moreover,

the previous SimLex-based multilingual data sets inherit the main deficiencies of the

English original version, such as the focus on nouns and highly frequent concepts.

Finally, prior work mostly focused on languages that are widely spoken and do not

account for the variety of the world’s languages. Our long-term goal is devising a

standardized methodology to extend the coverage also to languages that are resource-

lean and/or typologically diverse (e.g., Welsh, Kiswahili, as in this work).

Multilingual Data Sets for Natural Language Understanding. The Multi-SimLex initiative

and corresponding data sets are also aligned with the recent efforts on procuring

multilingual benchmarks that can help advance computational modeling of natural lan-

guage understanding across different languages. For instance, pretrained multilingual

language models such as multilingual BERT (Devlin et al. 2019) or XLM (Conneau and

Lample 2019) are typically probed on XNLI test data (Conneau et al. 2018b) for cross-

lingual natural language inference. XNLI was created by translating examples from the

English MultiNLI data set, and projecting its sentence labels (Williams, Nangia, and

Bowman 2018). Other recent multilingual data sets target the task of question answering

based on reading comprehension: i) MLQA (Lewis et al. 2019) includes 7 languages;

ii) XQuAD (Artetxe, Ruder, and Yogatama 2019) 10 languages; and iii) TyDiQA (Clark

et al. 2020) 9 widely spoken typologically diverse languages. While MLQA and XQuAD

result from the translation from an English data set, TyDiQA was built independently

in each language. Another multilingual data set, PAWS-X (Yang et al. 2019), focused

on the paraphrase identification task and was created translating the original English

PAWS (Zhang, Baldridge, and He 2019) into 6 languages. XCOPA (Ponti et al. 2020) is a

crosslingual data set for the evaluation of crosslingual causal commonsense reasoning,

obtained through translation of the English COPA data (Roemmele, Bejan, and Gordon

2011) to 11 target languages. A large number of tasks have been recently integrated into

unified multilingual evaluation suites: XTREME (Hu et al. 2020) and XGLUE (Liang

et al. 2020). We believe that Multi-SimLex can substantially contribute to this endeavor

by offering a comprehensive multilingual benchmark for the fundamental lexical level

relation of semantic similarity. In future work, Multi-SimLex also offers an opportunity

to investigate the correlations between word-level semantic similarity and performance

in downstream tasks such as QA and NLI across different languages.

4. The Base for Multi-SimLex: Extending English SimLex-999

In this section, we discuss the design principles behind the English (ENG) Multi-SimLex

data set, which is the basis for all the Multi-SimLex data sets in other languages, as

detailed in §5. We first argue that a new, more balanced, and more comprehensive

evaluation resource for lexical semantic similarity in English is necessary. We then

describe how the 1,888 word pairs contained in the ENG Multi-SimLex were selected

855

l

D

o

w

n

o

a

d

e

d

f

r

o

m

h

t

t

p

:

/

/

d

i

r

e

c

t

.

m

i

t

.

e

d

u

/

c

o

l

i

/

l

a

r

t

i

c

e

–

p

d

f

/

/

/

/

4

6

4

8

4

7

1

8

8

8

2

8

7

/

c

o

l

i

_

a

_

0

0

3

9

1

p

d

.

f

b

y

g

u

e

s

t

t

o

n

0

7

S

e

p

e

m

b

e

r

2

0

2

3

Computational Linguistics

Volume 46, Number 4

in such a way as to represent various linguistic phenomena within a single integrated

resource.

Construction Criteria. The following criteria have to be satisfied by any high-quality

semantic evaluation resource, as argued by previous studies focused on the creation of

such resources (Hill, Reichart, and Korhonen 2015; Gerz et al. 2016; Vuli´c et al. 2017a;

Camacho-Collados et al. 2017, inter alia):

(C1) Representative and diverse. The resource must cover the full range of diverse

concepts occurring in natural language, including different word classes (e.g., nouns,

verbs, adjectives, adverbs), concrete and abstract concepts, a variety of lexical fields,

and different frequency ranges.

(C2) Clearly defined. The resource must provide a clear understanding of which

semantic relation exactly is annotated and measured, possibly contrasting it with other

relations. For instance, the original SimLex-999 and SimVerb-3500 explicitly focus on

true semantic similarity and distinguish it from broader relatedness captured by data

sets such as MEN (Bruni, Tran, and Baroni 2014) or WordSim-353 (Finkelstein et al.

2002).

(C3) Consistent and reliable. The resource must ensure consistent annotations obtained

from non-expert native speakers following simple and precise annotation guidelines.

In choosing the word pairs and constructing ENG Multi-SimLex, we adhere to these

requirements. Moreover, we follow good practices established by the research on related

resources. In particular, since the introduction of the original SimLex-999 data set (Hill,

Reichart, and Korhonen 2015), follow-up work has improved its construction protocol

across several aspects, including: 1) coverage of more lexical fields, for example, by

relying on a diverse set of Wikipedia categories (Camacho-Collados et al. 2017), 2)

infrequent/rare words (Pilehvar et al. 2018), 3) focus on particular word classes, for

example, verbs (Gerz et al. 2016), and 4) annotation quality control (Pilehvar et al. 2018).

Our goal is to make use of these improvements toward a larger, more representative,

and more reliable lexical similarity data set in English and, consequently, in all other

languages.

The Final Output: English Multi-SimLex. In order to ensure that criterion C1 is satisfied,

we consolidate and integrate the data already carefully sampled in prior work into a

single, comprehensive, and representative data set. This way, we can control for diver-

sity, frequency, and other properties while avoiding performing this time-consuming

selection process from scratch. Note that, on the other hand, the word pairs chosen for

English are scored from scratch as part of the entire Multi-SimLex annotation process,

introduced later in §5. We now describe the external data sources for the final set of

word pairs:

1)

Source: SimLex-999 (Hill, Reichart, and Korhonen 2015). The English

Multi-SimLex has been initially conceived as an extension of the original

SimLex-999 data set. Therefore, we include all 999 word pairs from

SimLex, which span 666 noun pairs, 222 verb pairs, and 111 adjective

pairs. While SimLex-999 already provides examples representing different

856

l

D

o

w

n

o

a

d

e

d

f

r

o

m

h

t

t

p

:

/

/

d

i

r

e

c

t

.

m

i

t

.

e

d

u

/

c

o

l

i

/

l

a

r

t

i

c

e

–

p

d

f

/

/

/

/

4

6

4

8

4

7

1

8

8

8

2

8

7

/

c

o

l

i

_

a

_

0

0

3

9

1

p

d

.

f

b

y

g

u

e

s

t

t

o

n

0

7

S

e

p

e

m

b

e

r

2

0

2

3

Vuli´c et al.

Multi-SimLex

2)

3)

4)

5)

POS classes, it does not have a sufficient coverage of different linguistic

phenomena: For instance, it contains only very frequent concepts, and it

does not provide a representative set of verbs (Gerz et al. 2016).

Source: SemEval-17: Task 2 (henceforth SEMEVAL-500; Camacho-Collados

et al. 2017). We start from the full data set of 500 concept pairs to extract a

total of 334 concept pairs for English Multi-SimLex a) which contain only

single-word concepts, b) which are not named entities, c) where POS tags

of the two concepts are the same, d) where both concepts occur in the

top 250K most frequent word types in the English Wikipedia, and e) which

do not already occur in SimLex-999. The original concepts were sampled

as to span all the 34 domains available as part of BabelDomains

(Camacho-Collados and Navigli 2017), which roughly correspond to the

main high-level Wikipedia categories. This ensures topical diversity in our

sub-sample.

Source: CARD-660 (Pilehvar et al. 2018). Sixty-seven word pairs are taken

from this data set focused on rare word similarity, applying the same

selection criteria a to e utilized for SEMEVAL-500. Words are controlled for

frequency based on their occurrence counts from the Google News data

and the ukWaC corpus (Baroni et al. 2009). CARD-660 contains some

words that are very rare (logboat), domain-specific (erythroleukemia), and

slang (2mrw), which might be difficult to translate and annotate across a

wide array of languages. Hence, we opt for retaining only the concept

pairs above the threshold of the top 250K most frequent Wikipedia

concepts, as above.

Source: SimVerb-3500 (Gerz et al. 2016) Because both CARD-660 and

SEMEVAL-500 are heavily skewed toward noun pairs, and nouns also

dominate the original SimLex-999, we also extract additional verb pairs

from the verb-specific similarity data set SimVerb-3500. We randomly

sample 244 verb pairs from SimVerb-3500 that represent all similarity

spectra. In particular, we add 61 verb pairs for each of the similarity

intervals: [0, 1.5), [1.5, 3), [3, 4.5), [4.5, 6]. Because verbs in SimVerb-3500

were originally chosen from VerbNet (Kipper, Snyder, and Palmer 2004;

Kipper et al. 2008), they cover a wide range of verb classes and their

related linguistic phenomena.

Source: University of South Florida (USF; Nelson, McEvoy, and Schreiber

2004) norms, the largest database of free association for English. In order to

improve the representation of different POS classes, we sample additional

adjectives and adverbs from the USF norms following the procedure

established by Hill, Reichart, and Korhonen (2015) and Gerz et al. (2016).

This yields an additional 122 adjective pairs, but only a limited number of

adverb pairs (e.g., later – never, now – here, once – twice). Therefore, we also

create a set of adverb pairs semi-automatically by sampling adjectives that

can be derivationally transformed into adverbs (e.g., adding the suffix -ly)

from the USF, and assessing the correctness of such derivation in WordNet.

The resulting pairs include, for instance, primarily – mainly, softly – firmly,

roughly – reliably, and so forth. We include a total of 123 adverb pairs into

the final English Multi-SimLex. Note that this is the first time that adverbs

are included into any semantic similarity data set.

l

D

o

w

n

o

a

d

e

d

f

r

o

m

h

t

t

p

:

/

/

d

i

r

e

c

t

.

m

i

t

.

e

d

u

/

c

o

l

i

/

l

a

r

t

i

c

e

–

p

d

f

/

/

/

/

4

6

4

8

4

7

1

8

8

8

2

8

7

/

c

o

l

i

_

a

_

0

0

3

9

1

p

d

.

f

b

y

g

u

e

s

t

t

o

n

0

7

S

e

p

e

m

b

e

r

2

0

2

3

857

Computational Linguistics

Volume 46, Number 4

Fulfillment of Construction Criteria. The final ENG Multi-SimLex data set spans 1,051 noun

pairs, 469 verb pairs, 245 adjective pairs, and 123 adverb pairs.4 As mentioned earlier,

the criterion C1 has been fulfilled by relying only on word pairs that already underwent

meticulous sampling processes in prior work, integrating them into a single resource.

As a consequence, Multi-SimLex allows for fine-grained analyses over different POS

classes, concreteness levels, similarity spectra, frequency intervals, relation types, mor-

phology, and lexical fields; and it also includes some challenging orthographically sim-

ilar examples (e.g., infection – inflection).5 We ensure that criteria C2 and C3 are satisfied

by using similar annotation guidelines as Simlex-999, SimVerb-3500, and SEMEVAL-

500 that explicitly target semantic similarity. In what follows, we outline the carefully

tailored process of translating and annotating Multi-SimLex data sets in all target

languages.

5. Multi-SimLex: Translation and Annotation

We now detail the development of the final Multi-SimLex resource, describing our lan-

guage selection process, as well as translation and annotation of the resource, including

the steps taken to ensure and measure the quality of this resource. We also provide key

data statistics and preliminary crosslingual comparative analyses.

Language Selection. Multi-SimLex comprises eleven languages in addition to English.

The main objective for our inclusion criteria has been to balance language prominence

(by number of speakers of the language) for maximum impact of the resource, while

simultaneously having a diverse suite of languages based on their typological features

(such as morphological type and language family). Table 1 summarizes key information

about the languages currently included in Multi-SimLex. We have included a mixture

of fusional, agglutinative, isolating, and introflexive languages that come from eight

different language families. This includes languages that are very widely used such

as Chinese Mandarin and Spanish, and low-resource languages such as Welsh and

Kiswahili. We acknowledge that, despite a good balance between typological diversity

and language prominence, the initial Multi-SimLex language sample still contains some

gaps as it does not cover languages from some language families and geographical

regions such as the Americas or Australia. This is mostly due to added difficulty for

the authors to reach trusted translators and annotators for these languages. This also

indicates why Multi-SimLex has been envisioned as a collaborative community project:

We hope to further include additional languages and inspire other researchers that work

more closely with under-resourced languages to contribute to the effort over the lifetime

of this project.

4 There is a very small number of adjective and verb pairs extracted from CARD-660 and SEMEVAL-500 as

well. For instance, the total number of verbs is 469, since we augment the original 222 SimLex-999 verb

pairs with 244 SimVerb-3500 pairs and 3 SEMEVAL-500 pairs; and similarly for adjectives.

5 Unlike SEMEVAL-500 and CARD-660, we do not explicitly control for the equal representation of concept

pairs across each similarity interval for several reasons: a) Multi-SimLex contains a substantially larger

number of concept pairs, so it is possible to extract balanced samples from the full data; b) such balance,

even if imposed on the English data set, would be distorted in all other monolingual and crosslingual

data sets; c) balancing over similarity intervals arguably does not reflect a true distribution “in the wild”

where most concepts are only loosely related or completely unrelated.

858

l

D

o

w

n

o

a

d

e

d

f

r

o

m

h

t

t

p

:

/

/

d

i

r

e

c

t

.

m

i

t

.

e

d

u

/

c

o

l

i

/

l

a

r

t

i

c

e

–

p

d

f

/

/

/

/

4

6

4

8

4

7

1

8

8

8

2

8

7

/

c

o

l

i

_

a

_

0

0

3

9

1

p

d

.

f

b

y

g

u

e

s

t

t

o

n

0

7

S

e

p

e

m

b

e

r

2

0

2

3

Vuli´c et al.

Multi-SimLex

Table 1

The list of 12 languages in the Multi-SimLex multilingual suite along with their corresponding

language family (IE = Indo-European), broad morphological type, and their ISO 639-3 code.

The number of speakers is based on the total count of L1 and L2 speakers, according to

ethnologue.com.

Language

ISO 639-3

Family

Type

# Speakers

Chinese Mandarin

Welsh

English

Estonian

Finnish

French

Hebrew

Polish

Russian

Spanish

Kiswahili

Yue Chinese

CMN

CYM

ENG

EST

FIN

FRA

HEB

POL

RUS

SPA

SWA

YUE

Sino-Tibetan

IE: Celtic

IE: Germanic

Uralic

Uralic

IE: Romance

Afro-Asiatic

IE: Slavic

IE: Slavic

IE: Romance

Niger-Congo Agglutinative

Sino-Tibetan

Isolating

Fusional

Fusional

Agglutinative

Agglutinative

Fusional

Introflexive

Fusional

Fusional

Fusional

Isolating

1.116 B

0.7 M

1.132 B

1.1 M

5.4 M

280 M

9 M

50 M

260 M

534.3 M

98 M

73.5 M

The work on data collection can be divided into two crucial phases:

1)

A translation phase where the extended English language data set with

1,888 pairs (described in §4) is translated into eleven target languages, and

2) an annotation phase where human raters scored each pair in the

translated set as well as the English set. Detailed guidelines for both

phases are available online at: https://multisimlex.com.6

5.1 Word Pair Translation

Translators for each target language were instructed to find direct or approximate

translations for the 1,888 word pairs that satisfy the following rules. (1) All pairs in

the translated set must be unique (i.e., no duplicate pairs); (2) Translating two words

from the same English pair into the same word in the target language is not allowed

(e.g., it is not allowed to translate car and automobile to the same Spanish word coche).

(3) The translated pairs must preserve the semantic relations between the two words

when possible. This means that, when multiple translations are possible, the translation

that best conveys the semantic relation between the two words found in the original

English pair is selected. (4) If it is not possible to use a single-word translation in the

target language, then a multiword expression can be used to convey the nearest possible

semantics given the above points (e.g., the English word homework is translated into the

Polish multiword expression praca domowa).

Satisfying these rules when finding appropriate translations for each pair—while

keeping to the spirit of the intended semantic relation in the English version—is

not always straightforward. For instance, kinship terminology in Sinitic languages

(Mandarin and Yue) uses different terms depending on whether the family member

is older or younger, and whether the family member comes from the mother’s side or

6 All translators and annotators are native speakers of each target language, who are either bilingual or

proficient in English. They were reached through personal contacts of the authors or were recruited from

the pool of international students at the University of Cambridge.

859

l

D

o

w

n

o

a

d

e

d

f

r

o

m

h

t

t

p

:

/

/

d

i

r

e

c

t

.

m

i

t

.

e

d

u

/

c

o

l

i

/

l

a

r

t

i

c

e

–

p

d

f

/

/

/

/

4

6

4

8

4

7

1

8

8

8

2

8

7

/

c

o

l

i

_

a

_

0

0

3

9

1

p

d

.

f

b

y

g

u

e

s

t

t

o

n

0

7

S

e

p

e

m

b

e

r

2

0

2

3

Computational Linguistics

Volume 46, Number 4

Table 2

Inter-translator agreement (% of matched translated words) by independent translators using a

randomly selected 100-pair English sample from the Multi-SimLex data set, and the

corresponding 100-pair samples from the other data sets.

Languages: CMN

CYM EST

FIN FRA

HEB

POL

RUS

SPA SWA YUE Avg

Nouns

Adjectives

Verbs

Adverbs

Overall

84.5

88.5

88.0

92.9

86.5

80.0

88.5

74.0

100.0

81.0

90.0

61.5

82.0

57.1

82.0

87.3

73.1

76.0

78.6

82.0

78.2

69.2

78.0

92.9

78.0

98.2

100.0

100.0

100.0

99.0

90.0

84.6

74.0

85.7

85.0

95.5

100.0

100.0

100.0

97.5

85.5

69.2

74.0

85.7

80.5

80.0

88.5

76.0

85.7

81.0

77.3

84.6

86.0

78.6

80.5

86.0

82.5

82.5

87.0

84.8

(older brother) or

the father’s side. In Mandarin, brother has no direct translation and can be translated as

(younger brother). Therefore, in such cases, the translators

either:

are asked to choose the best option given the semantic context (relation) expressed by

the pair in English; otherwise, to select one of the translations arbitrarily. This is also

used to remove duplicate pairs in the translated set, by differentiating the duplicates

using a variant at each instance. Further, many translation instances were resolved using

near-synonymous terms in the translation. For example, the words in the pair: wood –

timber can only be directly translated in Estonian to puit, and are not distinguishable.

Therefore, the translators approximated the translation for timber to the compound

noun puitmaterjal (literally: wood material) in order to produce a valid pair in the target

language. In some cases, a less formal yet frequent variant is used as a translation. For

example, the words in the pair physician – doctor both translate to the same word in

Estonian (arst); the less formal word doktor is used as a translation of doctor to generate

a valid pair.

We measure the quality of the translated pairs by using a random sample set

of 100 pairs (from the 1,888 pairs) to be translated by an independent translator for

each target language. The sample is proportionally stratified according to the part-of-

speech categories. The independent translator is given identical instructions to the main

translator; we then measure the percentage of matched translated words between the

two translations of the sample set. Table 2 summarizes the inter-translator agreement

results for all languages and by part-of-speech subsets. Overall across all languages, the

agreement is 84.8%, which is similar to prior work (Camacho-Collados et al. 2017; Vuli´c,

Ponzetto, and Glavaˇs 2019).

5.2 Guidelines and Word Pair Scoring

Across all languages, 145 human annotators were asked to score all 1,888 pairs (in their

given language). We finally collect at least ten valid annotations for each word pair in

each language. All annotators were required to abide by the following instructions:

Each annotator must assign an integer score between 0 and 6 (inclusive)

indicating how semantically similar the two words in a given pair are. A

score of 6 indicates very high similarity (i.e., perfect synonymy), and zero

indicates no similarity.

Each annotator must score the entire set of 1,888 pairs in the data set. The

pairs must not be shared between different annotators.

1.

2.

860

l

D

o

w

n

o

a

d

e

d

f

r

o

m

h

t

t

p

:

/

/

d

i

r

e

c

t

.

m

i

t

.

e

d

u

/

c

o

l

i

/

l

a

r

t

i

c

e

–

p

d

f

/

/

/

/

4

6

4

8

4

7

1

8

8

8

2

8

7

/

c

o

l

i

_

a

_

0

0

3

9

1

p

d

.

f

b

y

g

u

e

s

t

t

o

n

0

7

S

e

p

e

m

b

e

r

2

0

2

3

Vuli´c et al.

Multi-SimLex

3.

4.

Annotators are able to break the workload over a period of approximately

2–3 weeks, and are able to use external sources (e.g., dictionaries, thesauri,

WordNet) if required.

Annotators are kept anonymous, and are not able to communicate with

each other during the annotation process.

The selection criteria for the annotators required that all annotators must be native

speakers of the target language. Preference to annotators with university education was

given, but not required. Annotators were asked to complete a spreadsheet containing

the translated pairs of words, as well as the part-of-speech, and a column to enter the

score. The annotators did not have access to the original pairs in English.

To ensure the quality of the collected ratings, we have used an adjudication protocol

similar to the one proposed and validated by Pilehvar et al. (2018). It consists of the

following three rounds:

Round 1: All annotators are asked to follow the instructions outlined above, and to rate

all 1,888 pairs with integer scores between 0 and 6.

Round 2: We compare the scores of all annotators and identify the pairs for each

annotator that have shown the most disagreement. We ask the annotators to reconsider

the assigned scores for those pairs only. The annotators may choose to either change

or keep the scores. As in the case with Round 1, the annotators have no access to the

scores of the other annotators, and the process is anonymous. This process gives a

chance for annotators to correct errors or reconsider their judgments, and has been

shown to be very effective in reaching consensus, as reported by Pilehvar et al. (2018).

We used a very similar procedure as Pilehvar et al. (2018) to identify the pairs with the

most disagreement; for each annotator, we marked the ith pair if the rated score si falls

within: si ≥ µi + 1.5 or si ≤ µi − 1.5, where µi is the mean of the other annotators’ scores.

Round 3: We compute the average agreement for each annotator (with the other

annotators) by measuring the average Spearman’s correlation against all other

annotators. We discard the scores of annotators that have shown the least average

agreement with all other annotators, while we maintain at least ten annotators per

language by the end of this round. The actual process is done in multiple iterations:

(S1) we measure the average agreement for each annotator with every other annotator

(this corresponds to the APIAA measure, see later); (S2) if we still have more than 10

valid annotators and the lowest average score is higher than in the previous iteration,

we remove the lowest one, and rerun S1. Table 3 shows the number of annotators at

both the start (Round 1) and end (Round 3) of our process for each language.

We measure the agreement between annotators using two metrics, average pair-

wise inter-annotator agreement (APIAA), and average mean inter-annotator agreement

(AMIAA). Both of these use Spearman’s correlation (ρ) between annotators’ scores, the

only difference is how they are averaged. They are computed as follows:

1)APIAA =

2 (cid:80)

i,j ρ(si, sj)

N(N − 1)

2)AMIAA =

(cid:80)

i ρ(si, µi)

N

, where: µi =

(cid:80)

j,j(cid:54)=i sj

N − 1

(1)

861

l

D

o

w

n

o

a

d

e

d

f

r

o

m

h

t

t

p

:

/

/

d

i

r

e

c

t

.

m

i

t

.

e

d

u

/

c

o

l

i

/

l

a

r

t

i

c

e

–

p

d

f

/

/

/

/

4

6

4

8

4

7

1

8

8

8

2

8

7

/

c

o

l

i

_

a

_

0

0

3

9

1

p

d

.

f

b

y

g

u

e

s

t

t

o

n

0

7

S

e

p

e

m

b

e

r

2

0

2

3

Computational Linguistics

Volume 46, Number 4

Table 3

Number of human annotators. R1 = Annotation Round 1, R3 = Round 3.

Languages:

R1: Start

R3: End

CMN CYM ENG

EST

FIN FRA HEB

POL

RUS

SPA

SWA

YUE

13

11

12

10

14

13

12

10

13

10

10

10

11

10

12

10

12

10

12

10

11

10

13

11

Table 4

Average pairwise inter-annotator agreement (APIAA). A score of 0.6 and above indicates strong

agreement.

Languages:

Nouns

Adjectives

Verbs

Adverbs

Overall

CMN

CYM

ENG

EST

FIN

FRA

HEB

POL

RUS

SPA

SWA

YUE

0.661

0.757

0.694

0.699

0.680

0.622

0.698

0.604

0.593

0.619

0.659

0.823

0.707

0.695

0.698

0.558

0.695

0.580

0.579

0.583

0.647

0.721

0.644

0.646

0.646

0.698

0.741

0.691

0.595

0.697

0.538

0.683

0.615

0.561

0.572

0.606

0.699

0.593

0.543

0.609

0.524

0.625

0.555

0.535

0.530

0.582

0.640

0.588

0.563

0.576

0.626

0.658

0.631

0.562

0.623

0.727

0.785

0.760

0.716

0.733

Table 5

Average mean inter-annotator agreement (AMIAA). A score of 0.6 and above indicates strong

agreement.

Languages:

Nouns

Adjectives

Verbs

Adverbs

Overall

CMN

CYM

ENG

EST

FIN

FRA

HEB

POL

RUS

SPA

SWA

YUE

0.757

0.800

0.774

0.749

0.764

0.747

0.789

0.733

0.693

0.742

0.766

0.865

0.811

0.777

0.794

0.696

0.790

0.715

0.697

0.715

0.766

0.792

0.757

0.748

0.760

0.809

0.831

0.808

0.729

0.812

0.680

0.754

0.720

0.645

0.699

0.717

0.792

0.722

0.655

0.723

0.657

0.737

0.690

0.608

0.667

0.710

0.743

0.710

0.671

0.703

0.725

0.686

0.702

0.623

0.710

0.804

0.811

0.784

0.716

0.792

where ρ(si, sj) is the Spearman’s correlation between annotators i and j’s scores (si, sj) for

all pairs in the data set, and N is the number of annotators. APIAA has been used widely

as the standard measure for inter-annotator agreement, including in the original SimLex

paper (Hill, Reichart, and Korhonen 2015). It simply averages the pairwise Spearman’s

correlation between all annotators. On the other hand, AMIAA compares the average

Spearman’s correlation of one held-out annotator with the average of all the other N − 1

annotators, and then averages across all N ‘held-out’ annotators. It smooths individual

annotator effects and arguably serves as a better upper bound than APIAA (Gerz et al.

2016; Vuli´c et al. 2017a; Pilehvar et al. 2018, inter alia).

We present the respective APIAA and AMIAA scores in Table 4 and Table 5 for all

part-of-speech subsets, as well as the agreement for the full data sets. As reported in

prior work (Gerz et al. 2016; Vuli´c et al. 2017a), AMIAA scores are typically higher than

APIAA scores. Crucially, the results indicate “strong agreement” (across all languages)

using both measurements. The languages with the highest annotator agreement were

French (FRA) and Yue Chinese (YUE), while Russian (RUS) had the lowest overall IAA

scores. These scores, however, are still considered to be “moderately strong agreement.”

862

l

D

o

w

n

o

a

d

e

d

f

r

o

m

h

t

t

p

:

/

/

d

i

r

e

c

t

.

m

i

t

.

e

d

u

/

c

o

l

i

/

l

a

r

t

i

c

e

–

p

d

f

/

/

/

/

4

6

4

8

4

7

1

8

8

8

2

8

7

/

c

o

l

i

_

a

_

0

0

3

9

1

p

d

.

f

b

y

g

u

e

s

t

t

o

n

0

7

S

e

p

e

m

b

e

r

2

0

2

3

Vuli´c et al.

Multi-SimLex

Table 6

Fine-grained distribution of concept pairs over different rating intervals in each Multi-SimLex

language, reported as percentages. The total number of concept pairs in each data set is 1,888.

Lang:

Interval

[0, 1)

[1, 2)

[2, 3)

[3, 4)

[4, 5)

[5, 6]

CMN CYM ENG

EST

FIN

FRA

HEB

POL

RUS

SPA

SWA

YUE

8.74 19.54 17.06 30.67 21.35 20.39 35.86 17.32 22.40 22.35 11.86

56.99 52.01 50.95 35.01 47.83 17.69 28.07 49.36 50.21 43.96 61.39 57.89

7.84

9.11 11.76

7.10 12.98

6.89

6.30

2.65

4.24

13.72 11.97 12.66 16.21 12.02 22.03 16.74 11.86 11.81 14.83

9.38

11.60

6.78

6.41

2.70

2.54

8.16 10.22 10.17 17.64

5.61 12.55

6.89

9.64

2.97

4.29

8.10

5.88

1.59

8.47

6.62

4.24

8.95

7.57

4.93

8.32

5.83

2.33

6.25

1.64

5.3 Data Analysis

Similarity Score Distributions. Across all languages, the average score (mean = 1.61,

median = 1.1) is on the lower side of the similarity scale. However, looking closer

at the scores of each language in Table 6, we indicate notable differences in both the

averages and the spread of scores. Notably, French has the highest average of similarity

scores (mean = 2.61, median = 2.5), and Kiswahili has the lowest average (mean = 1.28,

median = 0.5). Russian has the lowest spread (σ = 1.37), and Polish has the largest

(σ = 1.62). All of the languages are strongly correlated with each other, as shown in

Figure 1, where all of the Spearman’s correlation coefficients are greater than 0.6 for all

language pairs. Languages that share the same language family are highly correlated

(e.g., CMN-YUE, RUS-POL, EST-FIN). In addition, we observe high correlations between

English and most other languages, as expected. This is due to the effect of using English

as the base/anchor language to create the data set. In simple words, if one translates to

two languages L1 and L2 starting from the same set of pairs in English, it is highly likely

that L1 and L2 will diverge from English in different ways. Therefore, the similarity

between L1-ENG and L2-ENG is expected to be higher than between L1-L2, especially

if L1 and L2 are typologically dissimilar languages (e.g., HEB-CMN, see Figure 1). This

phenomenon is well documented in related prior work (Leviant and Reichart 2015;

Camacho-Collados et al. 2017; Mrkˇsi´c et al. 2017; Vuli´c, Ponzetto, and Glavaˇs 2019).

Although we acknowledge this as an artifact of the data set design, it would otherwise

be impossible to construct a semantically aligned and comprehensive data set across a

large number of languages.

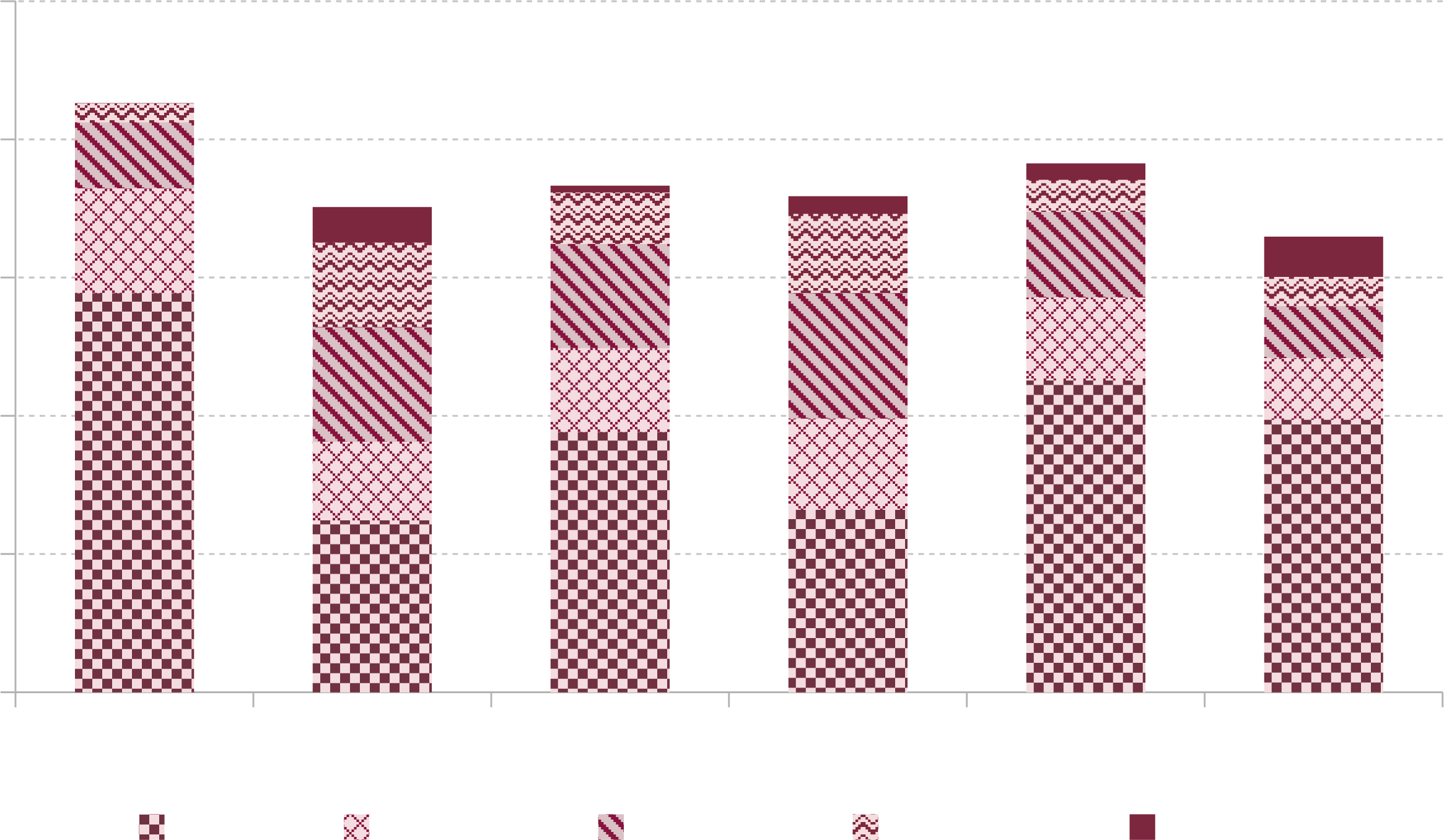

We also report differences in the distribution of the frequency of words among the

languages in Multi-SimLex. Figure 2 shows six example languages, where each bar seg-

ment shows the proportion of words in each language that occur in the given frequency

range. For example, the 10K–20K segment of the bars represents the proportion of words

in the data set that occur in the list of most frequent words between the frequency rank

of 10,000 and 20,000 in that language; likewise with other intervals. Frequency lists for

the presented languages are derived from Wikipedia and Common Crawl corpora.7

Although many concept pairs are direct or approximate translations of English pairs,

we can see that the frequency distribution does vary across different languages, and

7 Frequency lists were obtained from fastText word vectors, which are sorted by frequency:

https://fasttext.cc/docs/en/crawl-vectors.html.

863

l

D

o

w

n

o

a

d

e

d

f

r

o

m

h

t

t

p

:

/

/

d

i

r

e

c

t

.

m

i

t

.

e

d

u

/

c

o

l

i

/

l

a

r

t

i

c

e

–

p

d

f

/

/

/

/

4

6

4

8

4

7

1

8

8

8

2

8

7

/

c

o

l

i

_

a

_

0

0

3

9

1

p

d

.

f

b

y

g

u

e

s

t

t

o

n

0

7

S

e

p

e

m

b

e

r

2

0

2

3

Computational Linguistics

Volume 46, Number 4

Figure 1

Spearman’s correlation coefficient (ρ) of the similarity scores for all languages in Multi-SimLex.

l

D

o

w

n

o

a

d

e

d

f

r

o

m

h

t

t

p

:

/

/

d

i

r

e

c

t

.

m

i

t

.

e

d

u

/

c

o

l

i

/

l

a

r

t

i

c

e

–

p

d

f

/

/

/

/

4

6

4

8

4

7