Fixation-related Brain Potentials during Semantic

Integration of Object–Scene Information

Moreno I. Coco1,2, Antje Nuthmann3, and Olaf Dimigen4

Abstrakt

■ In vision science, a particularly controversial topic is whether

and how quickly the semantic information about objects is avail-

able outside foveal vision. Hier, we aimed at contributing to this

debate by coregistering eye movements and EEG while parti-

cipants viewed photographs of indoor scenes that contained a

semantically consistent or inconsistent target object. Linear de-

convolution modeling was used to analyze the ERPs evoked by

scene onset as well as the fixation-related potentials (FRPs) elic-

ited by the fixation on the target object (T) and by the preceding

fixation (t − 1). Object–scene consistency did not influence the

probability of immediate target fixation or the ERP evoked by

scene onset, which suggests that object–scene semantics was

not accessed immediately. Jedoch, during the subsequent

scene exploration, inconsistent objects were prioritized over con-

sistent objects in extrafoveal vision (d.h., looked at earlier) and were

more effortful to process in foveal vision (d.h., looked at longer).

In FRPs, we demonstrate a fixation-related N300/N400 effect,

whereby inconsistent objects elicit a larger frontocentral nega-

tivity than consistent objects. In line with the behavioral findings,

this effect was already seen in FRPs aligned to the pretarget fixa-

tion t − 1 and persisted throughout fixation t, indicating that the

extraction of object semantics can already begin in extrafoveal

vision. Taken together, the results emphasize the usefulness

of combined EEG/eye movement recordings for understanding

the mechanisms of object–scene integration during natural

viewing. ■

EINFÜHRUNG

In our daily activities—for example, when we search for

something in a room—our attention is mostly oriented to

Objekte. The time course of object recognition and the

role of overt attention in this process are therefore topics

of considerable interest in the visual sciences. In the con-

text of real-world scene perception, the question of what

constitutes an object is a more complex question than in-

tuition would suggest (z.B., Wolfe, Alvarez, Rosenholtz,

Kuzmova, & Sherman, 2011). An object is likely a hierar-

chical construct (z.B., Feldman, 2003), with both low-level

Merkmale (z.B., visual saliency) and high-level properties

(z.B., semantics) contributing to its identity. Entsprechend,

when a natural scene is inspected with eye movements,

the observer’s attentional selection is thought to be based

either on objects (z.B., Nuthmann & Henderson, 2010),

image features (saliency; Itti, Koch, & Niebur, 1998), or some

combination of the two (z.B., Stoll, Thrun, Nuthmann, &

Einhäuser, 2015).

An early and uncontroversial finding is that the recog-

nition of objects is mediated by their semantic consis-

tency. Zum Beispiel, an object that the observer would

not expect to occur in a particular scene (z.B., a tooth-

brush in a kitchen) is recognized less accurately (z.B.,

1The University of East London, 2CICPSI, Faculdade de Psicologia,

Universidade de Lisboa, 3Christian-Albrechts-Universität zu Kiel,

4Humboldt-Universität zu Berlin

Fenske, Aminoff, Gronau, & Bar, 2006; Davenport &

Töpfer, 2004; Biederman, 1972) and looked at for longer

than an expected object (z.B., Cornelissen & Võ, 2017;

Henderson, Weeks, & Hollingworth, 1999; De Graef,

Christiaens, & d’Ydewalle, 1990).

What is more controversial, Jedoch, is the exact time

course along which the meaning of an object is processed

and how this semantic processing then influences the

overt allocation of visual attention (see Wu, Wick, &

Pomplun, 2014, für eine Rezension). Two interrelated questions

are at the core of this debate: (1) How much time is needed

to access the meaning of objects after a scene is displayed,

Und (2) Can object semantics be extracted before the ob-

ject is overtly attended, das ist, while the object is still out-

side high-acuity foveal vision (> 1° eccentricity) or even in

the periphery (> 5° eccentricity)?

Evidence that the meaning of not-yet-fixated objects can

capture overt attention comes from experiments that have

used sparse displays of several standalone objects (z.B.,

Cimminella, Della Sala, & Coco, in press; Nuthmann, von

Groot, Huettig, & Olivers, 2019; Belke, Humphreys,

Watson, Meyer, & Telling, 2008; Moores, Laiti, & Chelazzi,

2003). Zum Beispiel, across three different experiments,

Nuthmann et al. found that the very first saccade in the dis-

play was directed more frequently to objects that were

semantically related to a target object rather than to un-

related objects.

Whether such findings generalize to objects embed-

ded in real-world scenes is currently an open research

© 2019 Massachusetts Institute of Technology. Published under a

Creative Commons Attribution 4.0 International (CC BY 4.0) Lizenz.

Zeitschrift für kognitive Neurowissenschaften 32:4, S. 571–589

https://doi.org/10.1162/jocn_a_01504

D

Ö

w

N

l

Ö

A

D

e

D

l

l

/

/

/

/

J

T

T

F

/

ich

T

.

:

/

/

F

R

Ö

M

D

Ö

H

w

T

N

T

P

Ö

:

A

/

D

/

e

D

M

ich

F

R

T

Ö

P

M

R

C

H

.

P

S

ich

l

D

v

ich

R

e

e

R

C

T

C

.

M

H

A

ich

e

R

D

.

u

C

Ö

Ö

M

C

N

/

J

A

Ö

R

T

C

ich

C

N

e

/

–

A

P

R

D

T

ich

3

2

C

l

4

e

5

–

7

P

1

D

F

2

0

/

1

3

3

2

2

/

4

4

7

/

5

Ö

7

C

1

N

/

_

A

1

_

8

0

6

1

1

5

2

0

7

4

6

P

/

D

J

Ö

B

C

j

N

G

_

u

A

e

_

S

0

T

1

Ö

5

N

0

0

4

8

.

P

S

D

e

F

P

e

B

M

j

B

e

G

R

u

2

e

0

S

2

T

3

/

J

/

F

T

.

Ö

N

0

5

M

A

j

2

0

2

1

question. The size of the visual span—that is, the area of

the visual field from which observers can take in useful

Information (see Rayner, 2014, für eine Rezension)—is large

in scene viewing. For object-in-scene search, it corre-

sponded to approximately 8° in each direction from

fixation (Nuthmann, 2013). This opens up the possibility

that both low- and high-level object properties can be

processed outside the fovea. This is clearly the case for

low-level visual features: Objects that are highly salient

(d.h., visually distinct) are preferentially selected for fixation

(z.B., Stoll et al., 2015). If semantic processing also takes

place in extrafoveal vision, then objects that are inconsis-

tent with the scene context (which are also thought to be

more informative; Antes, 1974) should be fixated earlier

in time than consistent ones (Loftus & Mackworth, 1978;

Mackworth & Morandi, 1967).

Jedoch, results from eye-movement studies on this is-

sue have been mixed. A number of studies have indeed re-

ported evidence for an inconsistent object advantage (z.B.,

Borges, Fernandes, & Coco, 2019; LaPointe & Milliken,

2016; Bonitz & Gordon, 2008; Underwood, Templeman,

Lamming, & Foulsham, 2008; Loftus & Mackworth, 1978).

Among these studies, only Loftus and Mackworth (1978)

have reported evidence for immediate extrafoveal atten-

tional capture (d.h., within the first fixation) by object–scene

semantics. In this study, which used relatively sparse line

drawings of scenes, the mean amplitude of the saccade into

the critical object was more than 7°, suggesting that viewers

could process semantic information based on peripheral

information obtained in a single fixation. Im Gegensatz,

other studies have failed to find any advantage for

inconsistent objects in attracting overt attention (z.B.,

Võ & Henderson, 2009, 2011; Henderson et al., 1999;

De Graef et al., 1990). In these experiments, only mea-

sures of foveal processing—such as gaze duration—

were influenced by object–scene consistency, with lon-

ger fixation times on inconsistent than on consistent

Objekte.

Interessant, a similar controversy exists in the lit-

erature on eye guidance in sentence reading. Obwohl

some degree of parafoveal processing during reading is

uncontroversial, it is less clear whether semantic infor-

mation is acquired from the parafovea (Andrews &

Veldre, 2019, für eine Rezension). Most evidence from studies

involving readers of English has been negative (z.B.,

Rayner, Balota, & Pollatsek, 1986), whereas results from

reading German (z.B., Hohenstein & Kliegl, 2014) Und

Chinese (z.B., Yan, Richter, Shu, & Kliegl, 2009) vorschlagen

that parafoveal processing can advance up to the level of

semantic processing.

The processing of object–scene inconsistencies and its

time course have also been investigated in electrophysi-

ological studies (z.B., Mudrik, Lamy, & Deouell, 2010;

Ganis & Kutas, 2003). In ERPs, it is commonly found that

scene-inconsistent objects elicit a larger negative brain re-

sponse compared with consistent ones. This long-lasting

negative shift typically starts as early as 200–250 msec

after stimulus onset (z.B., Draschkow, Heikel, Võ, Fiebach,

& Sassenhagen, 2018; Mudrik, Shalgi, Lamy, & Deouell,

2014) and has its maximum at frontocentral scalp sites,

in contrast to the centroparietal N400 effect for words

(z.B., Kutas & Federmeier, 2011). The effect was found

for objects that appeared at a cued location after the

scene background was already shown (Ganis & Kutas,

2003), for objects that were photoshopped into the

scene (Coco, Araujo, & Petersson, 2017; Mudrik et al.,

2010, 2014), and for objects that were part of realistic

photographs ( Võ & Wolfe, 2013). These ERP effects of

object–scene consistency have typically been subdivided

into two distinct components: N300 and N400. The earlier

part of the negative response, usually referred to as N300,

has been taken to reflect the context-dependent difficulty

of object identification, whereas the later N400 has been

linked to semantic integration processes after the object is

identified (z.B., Dyck & Brodeur, 2015). The present study

was not designed to differentiate between these two sub-

components, especially considering that their scalp distri-

bution is strongly overlapping or even topographically

indistinguishable (Draschkow et al., 2018). Daher, for rea-

sons of simplicity, we will in most cases simply refer to

all frontocentral negativities as “N400.”

One limiting factor of existing ERP studies is that the

data were gathered using steady-fixation paradigms in

which the free exploration of the scene through eye

movements was not permitted. Stattdessen, the critical object

was typically large and/or located relatively close to the

center of the screen, and ERPs were time-locked to the

onset of the image (z.B., Mudrik et al., 2010). Weil

of these limitations, it remains unclear whether foveation

of the object is a necessary condition for processing

object–scene consistencies or whether such processing

can at least begin in extrafoveal vision.

In the current study, we used fixation-related po-

tentials (FRPs), das ist, EEG waveforms aligned to fixation

onset, to shed new light on the controversial findings of

the role of foveal versus extrafoveal vision in extracting

object semantics, while providing insights into the

patterns of brain activity that underlie them (for reviews

about FRPs, see Nikolaev, Meghanathan, & van Leeuwen,

2016; Dimigen, Sommer, Hohlfeld, Jacobs, & Kliegl, 2011).

FRPs have been used to investigate the brain-electric

correlates of natural reading, as opposed to serial word

presentation, helping researchers to provide finer details

about the online processing of linguistic features (wie zum Beispiel

word predictability; Kliegl, Dambacher, Dimigen, Jacobs,

& Sommer, 2012; Kretzschmar, Bornkessel-Schlesewsky,

& Schlesewsky, 2009) or the dynamics of the perceptual

span during reading (z.B., parafovea-on-fovea effects;

Niefind & Dimigen, 2016). More recently, the coregistra-

tion method has also been applied to investigate active

visual search (z.B., Ušćumlić & Blankertz, 2016; Devillez,

Guyader, & Guérin-Dugué, 2015; Kaunitz et al., 2014;

Brouwer, Reuderink, Vincent, van Gerven, & van Erp, 2013;

Kamienkowski, Ison, Quiroga, & Sigman, 2012), Objekt

572

Zeitschrift für kognitive Neurowissenschaften

Volumen 32, Nummer 4

D

Ö

w

N

l

Ö

A

D

e

D

l

l

/

/

/

/

J

T

T

F

/

ich

T

.

:

/

/

F

R

Ö

M

D

Ö

H

w

T

N

T

P

Ö

:

A

/

D

/

e

D

M

ich

F

R

T

Ö

P

M

R

C

H

.

P

S

ich

l

D

v

ich

R

e

e

R

C

T

C

.

M

H

A

ich

e

R

D

.

u

C

Ö

Ö

M

C

N

/

J

A

Ö

R

T

C

ich

C

N

e

/

–

A

P

R

D

T

ich

3

2

C

l

4

e

5

–

7

P

1

D

F

2

0

/

1

3

3

2

2

/

4

4

7

/

5

Ö

7

C

1

N

/

_

A

1

_

8

0

6

1

1

5

2

0

7

4

6

P

/

D

J

Ö

B

C

j

N

G

_

u

A

e

_

S

0

T

1

Ö

5

N

0

0

4

8

.

P

S

D

e

F

P

e

B

M

j

B

e

G

R

u

2

e

0

S

2

T

3

/

J

.

F

T

/

Ö

N

0

5

M

A

j

2

0

2

1

identification (Rämä & Baccino, 2010), and affective process-

ing in natural scene viewing (Simola, Le Fevre, Torniainen, &

Baccino, 2015).

In this study, we simultaneously recorded eye move-

ments and FRPs during the viewing of real-world scenes

to distinguish between three alternative hypotheses on

object–scene integration that can be derived from the lit-

erature: (A) One glance of the scene is sufficient to extract

object semantics from extrafoveal vision (z.B., Loftus &

Mackworth, 1978), (B) extrafoveal processing of object–

scene semantics is possible but takes some time to unfold

(z.B., Bonitz & Gordon, 2008; Underwood et al., 2008),

Und (C) the processing of object semantics requires foveal

vision, das ist, a direct fixation of the critical object (z.B., Võ &

Henderson, 2009; Henderson et al., 1999; De Graef et al.,

1990). We note that these possibilities are not mutually

exclusive, an issue we elaborate on in the Discussion section.

For the behavioral data, these hypotheses translate as

follows: under Hypothesis A, the probability of immedi-

ate target fixation should reveal that already the first

saccade on the scene goes more often toward inconsis-

tent than consistent objects. Under Hypothesis B, Dort

should be no effect on the first eye movement, aber die

latency to first fixation on the critical object should be shorter

for inconsistent than consistent objects. Under Hypothesis

C, only fixation times on the critical object itself should differ

as a function of object–scene consistency, with longer gaze

durations on inconsistent objects.

For the electrophysiological data analysis, we used a novel

regression-based analysis approach (linear deconvolution

modeling; Cornelissen, Sassenhagen, & Võ, 2019; Dimigen

& Ehinger, 2019; Ehinger & Dimigen, 2019; Kristensen, Rivet,

& Guérin-Dugué, 2017; Schmied & Kutas, 2015B; Dandekar,

Privitera, Carney, & Klein, 2012), which allowed us to con-

trol for the confounding influences of overlapping poten-

tials and oculomotor covariates on the neural responses

during natural viewing. In the EEG, Hypothesis A can be

tested by computing the ERP time-locked to the onset of

the scene on the display, following the traditional ap-

proach. Given that the critical objects in our study were

not placed directly in the center of the screen from which

observers started their exploration of the scene, any effect

of object–scene congruency in this ERP would suggest that

object semantics is rapidly processed in extrafoveal vision,

even before the first eye movement is generated, in line

with Loftus and Mackworth (1978). Under Hypothesis B,

we would not expect to see an effect in the scene-onset

ERP. Stattdessen, we should find a negative brain potential

(N400) for inconsistent as compared with consistent ob-

jects in the FRP aligned to the fixation that precedes the

one that first lands on the critical object. Endlich, Wenn

Hypothesis C is correct, an N400 for inconsistent objects

should only arise once the critical object is foveated, Das

Ist, in the FRP aligned to the target fixation (fixation t). In con-

trast, no consistency effects should appear in the scene-

onset ERP or in the FRP aligned to the pretarget fixation

(fixation t − 1). To preview the results, both the eye move-

ment and the EEG data lend support for Hypothesis B.

METHODEN

Design and Task Overview

We designed a short-term visual working memory change

detection task, illustrated in Figures 1 Und 2. Während der

study phase, participants were exposed to photographs

D

Ö

w

N

l

Ö

A

D

e

D

l

l

/

/

/

/

J

T

T

F

/

ich

T

.

:

/

/

F

R

Ö

M

D

Ö

H

w

T

N

T

P

Ö

:

A

/

D

/

e

D

M

ich

F

R

T

Ö

P

M

R

C

H

.

P

S

ich

l

D

v

ich

R

e

e

R

C

T

C

.

M

H

A

ich

e

R

D

.

u

C

Ö

Ö

M

C

N

/

J

A

Ö

R

T

C

ich

C

N

e

/

–

A

P

R

D

T

ich

3

2

C

l

4

e

5

–

7

P

1

D

F

2

0

/

1

3

3

2

2

/

4

4

7

/

5

Ö

7

C

1

N

/

_

A

1

_

8

0

6

1

1

5

2

0

7

4

6

P

/

D

J

Ö

B

C

j

N

G

_

u

A

e

_

S

0

T

1

Ö

5

N

0

0

4

8

.

P

S

D

e

F

P

e

B

M

j

B

e

G

R

u

2

e

0

S

2

T

3

/

J

T

F

.

/

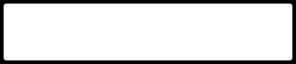

Figur 1. Example stimuli and

conditions in the study.

Participants viewed photographs

of indoor scenes that contained a

target object (highlighted with a

red circle) that was either

semantically consistent (Hier,

toothpaste) or semantically

inconsistent (Hier, flashlight)

with the context of the scene.

The target object could be

placed at different locations

within the scene, on either the

left or right side. The example

gaze path plotted on the right

illustrates the three types of

fixations analyzed in the study:

(A) t – 1, the fixation preceding

the first fixation to the target

Objekt; (B) T, the first fixation to

the target; Und (C) nt, all other

(nontarget) fixations. Fixation

duration is proportional

to the diameter of the circle,

which is red for the critical

fixations and black for the

nontarget fixations.

Ö

N

0

5

M

A

j

2

0

2

1

Coco, Nuthmann, and Dimigen

573

additional two participants were recorded but removed

from the analysis because of excessive scalp muscle

(EMG) activity or skin potentials in the raw EEG. Ethics

approval was obtained from the Psychology Research

Ethics Committee of the University of Edinburgh.

Apparatus and Recording

Scenes were presented on a 19-in. CRT monitor (Iiyama

Vision Master Pro 454) at a vertical refresh rate of 75 Hz.

At the viewing distance of 60 cm, each scene subtended

35.8° × 26.9° (width × height). Eye movements were re-

corded monocularly from the dominant eye using an SR

Research EyeLink 1000 desktop-mounted system at a

sampling rate of 1000 Hz. Eye dominance for each par-

ticipant was determined with a parallax test. A chin-and-

forehead rest was used to stabilize the participant’s head.

Nine-point calibrations were run at the beginning of each

session and whenever the participant’s fixation deviated

by > 0.5° horizontally or > 1° vertically from a drift cor-

rection point presented at trial onset.

The EEG was recorded from 64 active electrodes at a

sampling rate of 512 Hz using BioSemi ActiveTwo am-

plifiers. Four electrodes, located near the left and right

canthus and above and below the right eye, recorded the

EOG. All channels were referenced against the BioSemi

common mode sense (active electrode) and grounded

to a passive electrode. The BioSemi hardware is DC

coupled and applies digital low-pass filtering through

the A/D-converter’s decimation filter, which has a fifth-order

sinc response with a −3 dB point at one fifth of the sample

rate (corresponding approximately to a 100-Hz low-

pass filter).

Offline, the EEG was rereferenced to the average of all

scalp electrodes and filtered using EEGLAB’s (Delorme &

Makeig, 2004) Hamming-windowed sinc finite impulse

response filter (pop_eegfiltnew.m) with default settings.

The lower edge of the filter’s passband was set to 0.2 Hz

(with −6 dB attenuation at 0.1 Hz); and the upper edge,

Zu 30 Hz (with −6 dB attenuation at 33.75 Hz). Eye track-

ing and EEG data were synchronized using shared triggers

sent via the parallel port of the stimulus presentation PC to

the two recording computers. Synchronization was per-

formed offline using the EYE-EEG extension (v0.8) für

EEGLAB (Dimigen et al., 2011). All data sets were aligned

with a mean synchronization error ≤ 2 msec as computed

based on trigger alignment after synchronization.

Materials and Rating

Stimuli consisted of 192 color photographs of indoor

scenes (z.B., bedrooms, bathrooms, offices). Real target

objects were placed in the physical scene, before each

picture was taken with a tripod under controlled lighting

conditions and with a fixed aperture (d.h., there was no

photo-editing). One scene is shown in Figure 1; minia-

ture versions of all stimuli used in this study are found

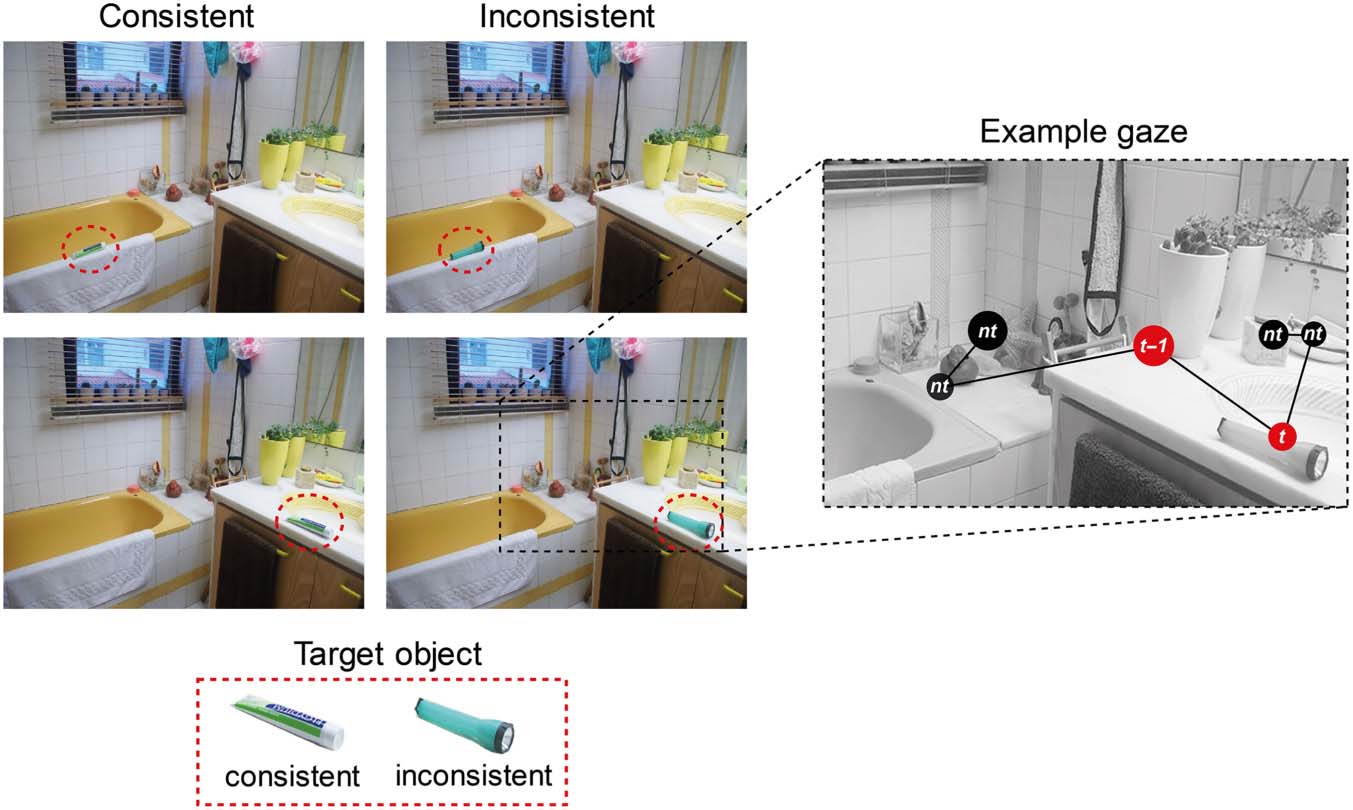

Figur 2. Trial scheme. After a drift correction, the study scene

appeared. The display duration of the scene was controlled by a

gaze-contingent mechanism, and it disappeared, on average, 2000 ms

after the target object was fixated. In the following retention interval,

only a fixation cross was presented. During the recognition phase,

the scene was presented again until participants pressed a button to

indicate whether or not a change had occurred within the scene. Alle

analyses in the present article focus on eye-movement and EEG data

collected during the study phase.

of indoor scenes (z.B., a bathroom), each of which con-

tained a target object that was either semantically consis-

tent (z.B., toothpaste) or inconsistent (z.B., a flashlight)

with the scene context. In the following recognition

Phase, after a short retention interval of 900 ms, Die

same scene was shown again, but in half of the trials,

either the identity, the location, or both the identity and

location of the target object had changed relative to the

study phase.

The participants’ task was to indicate with a keyboard

press whether or not a change had happened to the

scene (see also LaPointe & Milliken, 2016). All eye-

movement and EEG analyses in the present article focus

on the semantic consistency manipulation of the target

object during the study phase.

Teilnehmer

Twenty-four participants (nine men) zwischen dem Alter von

18 Und 33 Jahre (M = 25.0 Jahre) took part in the exper-

iment after providing written informed consent. Sie

were compensated with £7 per hour. All participants

had normal or corrected-to-normal vision. Data from an

574

Zeitschrift für kognitive Neurowissenschaften

Volumen 32, Nummer 4

D

Ö

w

N

l

Ö

A

D

e

D

l

l

/

/

/

/

J

T

T

F

/

ich

T

.

:

/

/

F

R

Ö

M

D

Ö

H

w

T

N

T

P

Ö

:

A

/

D

/

e

D

M

ich

F

R

T

Ö

P

M

R

C

H

.

P

S

ich

l

D

v

ich

R

e

e

R

C

T

C

.

M

H

A

ich

e

R

D

.

u

C

Ö

Ö

M

C

N

/

J

A

Ö

R

T

C

ich

C

N

e

/

–

A

P

R

D

T

ich

3

2

C

l

4

e

5

–

7

P

1

D

F

2

0

/

1

3

3

2

2

/

4

4

7

/

5

Ö

7

C

1

N

/

_

A

1

_

8

0

6

1

1

5

2

0

7

4

6

P

/

D

J

Ö

B

C

j

N

G

_

u

A

e

_

S

0

T

1

Ö

5

N

0

0

4

8

.

P

S

D

e

F

P

e

B

M

j

B

e

G

R

u

2

e

0

S

2

T

3

/

J

F

T

/

.

Ö

N

0

5

M

A

j

2

0

2

1

online at https://osf.io/sjprh/. Of the 192 scenes, 96 war

conceived as change items and 96 were conceived as no-

change items. Each one of the 96 change scenes was created

in four versions. Insbesondere, the scene (z.B., a bathroom)

was photographed with two alternative target objects in

Es, one that was consistent with the scene context (z.B., A

toothbrush) and one that was not (z.B., a flashlight).

Darüber hinaus, each of these two objects was placed at two

alternative locations (left or right side) within the scene

(z.B., either on the sink or on the bathtub). Entsprechend,

three types of change were implemented during the rec-

ognition phase (Congruency, Location, and Both; sehen

Procedure section below).

Each of the 96 no-change scenes was also a real pho-

tograph with either a consistent or an inconsistent object

in it, which was again located in either the left or right

half of the scene. Across the 96 no-change scenes, Die

factors consistency (consistent vs. inconsistent objects)

and location ( left and right) were also balanced.

Jedoch, each no-change scene was unique; das ist, Wir

did not create four different versions of each no-change

scene. The data of the 96 no-change scenes, die Waren

originally conceived to be filler trials, were included to

improve the signal-to-noise ratio of the EEG analyses,

as these scenes also had a balanced distribution of incon-

sistent and consistent objects.

Wie oben erklärt, scenes contained a critical object

that was either consistent or inconsistent with the scene

Kontext. Object consistency was assessed in a pretest rat-

ing study by eight naive participants who were not in-

volved in any other aspect of the study. Each participant

rated all of the no-change scenes as well as one of the four

versions of each change-scene (counterbalanced across

raters). Together with the scene, raters saw a box with

a cropped image of the critical object. They were asked

(A) to write down the name for the displayed object and

(B) to respond to the question “How likely is it that this

object would be found in this room?” using a 6-point

Likert scale (1–6). For the object naming, a mean naming

agreement of 96.35% was obtained. Außerdem, konsis-

tent objects were judged as significantly more likely (M =

5.78, SD = 0.57) to appear in the scene than inconsistent

Objekte (M = 1.88, SD = 1.11), wie von einem bestätigt

independent-samples Kruskal–Wallis H test, χ2(1) =

616.09, P < .001.

In addition, we ensured that there was no difference be-

tween consistent and inconsistent objects on three impor-

tant low-level variables: object size (pixels square), distance

from the center of the scene (degrees of visual angle),

and mean visual saliency of the object as computed using

the Adaptive Whitening Saliency model (Garcia-Diaz,

Fdez-Vidal, Pardo, & Dosil, 2012). Table 1 provides addi-

tional information about the target object. Independent t

tests showed no significant difference between inconsis-

tent and consistent objects in size, t(476) = −1.27, p =

.2; visual saliency, t(476) = 0.82, p = .41; and distance

from the center, t(476) = −1.75, p = .08.

The position of each target object was marked with an

invisible rectangular bounding box, which was used to im-

plement the gaze contingency mechanism (described in

the Procedure section below) and to determine whether

a fixation was inside the target object. The average width

of the bounding box was 6.1° ± 2.0° for consistent objects

and 6.1° ± 2.1° for inconsistent objects (see Table 1); the

average height was 5.1° ± 1.8° and/or 5.4° ± 2.2°, respec-

tively. The average distance of the object centroid from

the center of the scene was 12.1° (± 2.8°) for consistent

and 11.7° (± 3.0°) for inconsistent objects.

Procedure

A schematic representation of the task is shown in

Figure 2. Each trial started with a drift correction of the

eye tracker. Afterward, the study scene was presented

(e.g., a bathroom). The display duration of the study

scene was controlled by a gaze-contingent mechanism

that ensured that participants fixated the target object

(e.g., toothbrush or flashlight) at least once during the

trial. Specifically, the study scene disappeared, on aver-

age, 2000 msec (with a random jitter of ± 200 msec,

drawn from a uniform distribution) after the participant’s

eyes left the invisible bounding box of the target object

(and provided that the target had been fixated for at least

150 msec). The jittered delay of about 2000 msec was im-

plemented to prevent participants from learning to asso-

ciate the last fixated object during the study phase with

the changed object during the recognition phase. If the

participant did not fixate the target object within 10 sec,

the study scene disappeared from the screen and the re-

tention interval was triggered, which lasted for 900 msec.

In the following recognition phase (data not analyzed

here), the scene was presented again, either with (50% of

trials) or without (50% of trials) a change to an object in

the scene. Three types of object changes occurred with

equal probability: Location, Consistency, or Both. In the

(a) Location condition, the target object changed its po-

sition and moved either from left to right or from right to

left to another plausible location within the scene (e.g., a

toothbrush was placed elsewhere within the bathroom

scene). In the (b) Consistency condition, the object re-

mained in the same location but was replaced with an-

other object of opposite semantic consistency (e.g., the

toothbrush was replaced by a flashlight). Finally, in the

(c) Both condition, the object was both replaced and

moved within the scene (e.g., a toothbrush was replaced

by a flashlight at a different location).

During the recognition phase, participants had to indi-

cate whether they noticed any kind of change within the

scene by pressing the arrow keys on the keyboard.

Afterward, the scene disappeared, and the next trial be-

gan. If participants did not respond within 10 sec, a miss-

ing response was recorded.

The type of change between trials was fully counterba-

lanced using a Latin Square rotation. Specifically, the 96

Coco, Nuthmann, and Dimigen

575

D

o

w

n

l

o

a

d

e

d

l

l

/

/

/

/

j

f

/

t

t

i

t

.

:

/

/

f

r

o

m

D

o

h

w

t

n

t

p

o

:

a

/

d

/

e

d

m

i

f

r

t

o

p

m

r

c

h

.

p

s

i

l

d

v

i

r

e

e

r

c

t

c

.

m

h

a

i

e

r

d

.

u

c

o

o

m

c

n

/

j

a

o

r

t

c

i

c

n

e

/

-

a

p

r

d

t

i

3

2

c

l

4

e

5

-

7

p

1

d

f

2

0

/

1

3

3

2

2

/

4

4

7

/

5

o

7

c

1

n

/

_

a

1

_

8

0

6

1

1

5

2

0

7

4

6

p

/

d

j

o

b

c

y

n

g

_

u

a

e

_

s

0

t

1

o

5

n

0

0

4

8

.

p

S

d

e

f

p

e

b

m

y

b

e

g

r

u

2

e

0

s

2

t

3

/

j

t

/

.

f

o

n

0

5

M

a

y

2

0

2

1

Table 1. Eye Movement Behavior in the Task and Properties of the Target Object

Eye movement behavior

Ordinal fixation number of first target fixation

6.7 ± 6.0

5.2 ± 5.3

Consistent

Inconsistent

Mean ± SD

Mean ± SD

Fixation duration (t − 2), in msec

Fixation duration (t − 1), in msec

Fixation duration (t), in msec

Gaze duration on target, in msec

Number of refixations on target

220.7 ± 105

212.9 ± 95

207.6 ± 96

197 ± 91

261.6 ± 146

263.3 ± 136

408.5 ± 367.1

519.1 ± 373.6

1.7 ± 2

2.2 ± 2.1

Duration of refixations on target, in msec

238.9 ± 121.8

250.2 ± 135.7

Fixation duration (t + 1), in msec

Incoming saccade amplitude to t − 1 (°)

Incoming saccade amplitude to t (°)

Incoming saccade amplitude to t + 1 (°)

Distance of fixation t − 1 from the closest

edge of target (°)

Number of fixations after first encountering

target object until end of study phase

Duration of fixations after first encountering

target object (until end of study phase)

245.3 ± 148

243.7 ± 146

6.1 ± 5.2

8.5 ± 5.2

9.5 ± 5.9

6.8 ± 5.8

6 ± 4.8

8.3 ± 4.8

10.2 ± 5.8

6.3 ± 5.3

7.3 ± 2.1

7.3 ± 1.7

254.6 ± 120.4

251.7 ± 118.8

Target object properties

Distance of target object center from screen center (°)

12.1 ± 2.8

11.7 ± 3

Mean visual saliency (AWS model)

0.36 ± 0.16

0.37 ± 0.16

Width (°)

Height (°)

6.1 ± 2

5.1 ± 1.8

6.1 ± 2.1

5.4 ± 2.2

Area (degrees of visual angle squared)

16.1 ± 8.7

17.3 ± 11.4

Target object size and distance to target are based on the bounding box around the object. The fixation t + 1 is the first fixation after leaving the

bounding box of the target object.

change trials were distributed across 12 different lists, im-

plementing the different types of change. This implies

that each participant was exposed to an equal number

of consistent and inconsistent change trials. The 96 no-

change trials also were composed of an equal number

of consistent and inconsistent scenes and were the same

for each participant. During the experiment, all 192 trials

were presented in a randomized order. They were pre-

ceded by four practice trials at the start of the session.

Written instructions were given to explain the task, which

took 20–40 min to complete. The experiment was im-

plemented using the SR Research Experiment Builder

software.

Data Preprocessing

Eye-movement Events and Data Exclusion

Fixations and saccade events were extracted from the raw

gaze data using the SR Research Data Viewer software,

which performs saccade detection based on velocity and

acceleration thresholds of 30° sec−1 and 9500° sec−2,

respectively. To provide directly comparable results for

eye-movement behavior and FRP analyses, we discarded

all trials on which we did not have clean data from both re-

cordings. Specifically, from 4608 trials (24 participants × 192

trials), we excluded 10 trials (0.2%) because of machine

error (i.e., no data were recorded for those trials), 689 tri-

als (15.0%) because the participant responded incorrectly

after the recognition phase, and 494 trials (10.7%) be-

cause the target object was not fixated during the study

phase. Finally, we removed an additional 97 trials (2.1%)

for which the target fixation overlapped with intervals of

the EEG that contained nonocular artifacts (see below).

The final data set for the behavioral and FRP analyses

therefore was composed of 3318 unique trials: 1567 for

the consistent condition and 1751 for the inconsistent

condition. Per participant, this corresponded to an aver-

age of 65.3 trials (± 6.9, range = 48–78) for consistent

and 73.0 trials (± 6.9, range = 59–82) for inconsistent

576

Journal of Cognitive Neuroscience

Volume 32, Number 4

D

o

w

n

l

o

a

d

e

d

l

l

/

/

/

/

j

f

/

t

t

i

t

.

:

/

/

f

r

o

m

D

o

h

w

t

n

t

p

o

:

a

/

d

/

e

d

m

i

f

r

t

o

p

m

r

c

h

.

p

s

i

l

d

v

i

r

e

e

r

c

t

c

.

m

h

a

i

e

r

d

.

u

c

o

o

m

c

n

/

j

a

o

r

t

c

i

c

n

e

/

-

a

p

r

d

t

i

3

2

c

l

4

e

5

-

7

p

1

d

f

2

0

/

1

3

3

2

2

/

4

4

7

/

5

o

7

c

1

n

/

_

a

1

_

8

0

6

1

1

5

2

0

7

4

6

p

/

d

j

o

b

c

y

n

g

_

u

a

e

_

s

0

t

1

o

5

n

0

0

4

8

.

p

S

d

e

f

p

e

b

m

y

b

e

g

r

u

2

e

0

s

2

t

3

/

j

f

t

/

.

o

n

0

5

M

a

y

2

0

2

1

items. Because of the fixation check, participants were al-

ways fixating at the screen center when the scene appeared

on the display. This ongoing central fixation was removed

from all analyses.

EEG Ocular Artifact Correction

EEG recordings during free viewing are contaminated by

three types of ocular artifacts (Plöchl, Ossandón, & König,

2012) that need to be removed to get at the genuine brain

activity. Here, we applied an optimized variant (Dimigen,

2020) of independent component analysis (ICA; Jung

et al., 1998), which uses the information provided by the

eye tracker to objectively identify ocular ICA components

(Plöchl et al., 2012).

In a first step, we created optimized ICA training data

by high-pass filtering a copy of the EEG at 2 Hz (Dimigen,

2020; Winkler, Debener, Müller, & Tangermann, 2015)

and segmenting it into epochs lasting from scene onset

until 3 sec thereafter. These high-pass-filtered training

data were entered into an extended Infomax ICA using

EEGLAB, and the resulting unmixing weights were then

transferred to the original (i.e., less strictly filtered) re-

cording (Debener, Thorne, Schneider, & Viola, 2010).

From this original EEG data set, we then removed all inde-

pendent components whose time course varied more

strongly during saccade intervals (defined as lasting from

−20 msec before saccade onset until 20 msec after

saccade offset) than during fixations, with the threshold

for the variance ratio (saccade/fixation; see Plöchl et al.,

2012) set to 1.3. Finally, the artifact-corrected continuous

EEG was back-projected to the sensor space. For a valida-

tion of the ICA procedure, please refer to Supplementary

Figure S1.

In a next step, intervals with residual nonocular ar-

tifacts (e.g., EMG bursts) were detected by shifting a

2000-msec moving window in steps of 100 msec across

the continuous recording. Whenever the voltages within

the window exceeded a peak-to-peak threshold of 100 μV

in at least one of the channels, all data within the window

were marked as “bad” and subsequently excluded from

analysis. Within the linear deconvolution framework

(see below), this can easily be done by setting all predic-

tors to zero during these bad EEG intervals (Smith &

Kutas, 2015b), meaning that the data in these intervals

will not affect the computation.

Analysis

Eye-movement Data

Dependent measures. Behavioral analyses focused on

four eye-movement measures commonly reported in

the semantic consistency literature: (a) the cumulative

probability of having fixated the target object as a func-

tion of the ordinal fixation number, (b) the probability

of immediate object fixation, (c) the latency to first

fixation on the target object, and (d) the gaze duration

on the target object (cf. Võ & Henderson, 2009).

Linear mixed-effects modeling. Eye-movement data

were analyzed using linear mixed-effects models

(LMMs) and generalized LMMs (GLMM) as implemented

in the lme4 package in R (Bates, Mächler, Bolker, &

Walker, 2015). The only exception was the cumulative

probability of first fixations on the target for which a gen-

eralized linear model (GLM) was used. One advantage of

(G)LMM modeling is that it allows one to simultaneously

model the intrinsic variability of both participants and

scenes (e.g., Nuthmann & Einhäuser, 2015).

In all analyses, the main predictor was the consistency

of the critical object (contrast coding: consistent = −0.5,

inconsistent = 0.5) in the study scene. In the (G)LMMs,

Participant (24) and Scene (192) were included as ran-

dom intercepts.1 The cumulative probability of object fix-

ation was analyzed using a GLM with a binomial (probit)

link. This model included the Ordinal Number of Fixation

on the scene as a predictor; it was entered as a continu-

ous variable ranging from 1 to a maximum of 28 (the 99th

quantile).

In the tables of results, we report the beta coefficients,

t values (LMM), z values (GLMM), and p values for each

model. For LMMs, the level of significance was calculated

from an F test based on the Satterthwaite approximation

to the effective degrees of freedom (Satterthwaite, 1946),

whereas p values in GLMMs are based on asymptotic

Wald tests.

Electrophysiological Data

Linear deconvolution modeling (first level of analysis).

EEG measurements during active vision are associated

with two major methodological problems: overlapping

potentials and low-level signal variability (Dimigen &

Ehinger, 2019). Overlapping potentials arise from the rapid

pace of active information sampling through eye move-

ments, which causes the neural responses that are evoked

by subsequent fixations on the stimulus to overlap with

each other. Because the average fixation duration usually

varies between conditions, this changing overlap can eas-

ily confound the measured waveforms. A related issue is

the mutual overlap between the ERP elicited by the initial

presentation of the stimulus and the FRPs evoked by the

subsequent fixations on it. This second type of overlap is

especially important in experiments like ours, in which

the critical fixations occurred at different latencies after

scene onset in the two experimental conditions.

The problem of signal variability refers to the fact that

low-level visual and oculomotor variables can also influence

the morphology of the predominantly visually evoked

fixation-related neural responses (e.g., Kristensen et al.,

2017; Nikolaev et al., 2016; Dimigen et al., 2011). The most

relevant of these variables, which is known to modulate

the entire FRP waveform, is the amplitude of the saccade

Coco, Nuthmann, and Dimigen

577

D

o

w

n

l

o

a

d

e

d

l

l

/

/

/

/

j

f

/

t

t

i

t

.

:

/

/

f

r

o

m

D

o

h

w

t

n

t

p

o

:

a

/

d

/

e

d

m

i

f

r

t

o

p

m

r

c

h

.

p

s

i

l

d

v

i

r

e

e

r

c

t

c

.

m

h

a

i

e

r

d

.

u

c

o

o

m

c

n

/

j

a

o

r

t

c

i

c

n

e

/

-

a

p

r

d

t

i

3

2

c

l

4

e

5

-

7

p

1

d

f

2

0

/

1

3

3

2

2

/

4

4

7

/

5

o

7

c

1

n

/

_

a

1

_

8

0

6

1

1

5

2

0

7

4

6

p

/

d

j

o

b

c

y

n

g

_

u

a

e

_

s

0

t

1

o

5

n

0

0

4

8

.

p

S

d

e

f

p

e

b

m

y

b

e

g

r

u

2

e

0

s

2

t

3

/

j

t

f

/

.

o

n

0

5

M

a

y

2

0

2

1

that precedes fixation onset (e.g., Dandekar et al., 2012;

Thickbroom, Knezevič, Carroll, & Mastaglia, 1991). One

option for controlling the effect of saccade amplitude is

to include it as a continuous covariate in a massive uni-

variate regression model (Smith & Kutas, 2015a, 2015b),

in which a separate regression model is computed for

each EEG time point and channel ( Weiss, Knakker, &

Vidnyánszky, 2016; Hauk, Davis, Ford, Pulvermüller, &

Marslen-Wilson, 2006). However, this method does not

account for overlapping potentials.

An approach that allows one to simultaneously control

for overlapping potentials and low-level covariates is de-

convolution within the linear model (for tutorial reviews,

see Dimigen & Ehinger, 2019; Smith & Kutas, 2015a,

2015b), sometimes also called “continuous-time regres-

sion” (Smith & Kutas, 2015b). Initially developed to sepa-

rate overlapping BOLD responses (e.g., Serences, 2004),

linear deconvolution has also been applied to separate

overlapping potentials in ERP (Smith & Kutas, 2015b) and

FRP (Cornelissen et al., 2019; Ehinger & Dimigen, 2019;

Kristensen et al., 2017; Dandekar et al., 2012) paradigms.

Another elegant property of this approach is that the

ERPs elicited by scene onset and the FRPs elicited by

fixations on the scene can be disentangled and simulta-

neously estimated in the same regression model. The ben-

efits of deconvolution are illustrated in more detail in

Supplementary Figures S2 and S3.

Here, we applied this technique by using the new

unfold toolbox (Ehinger & Dimigen, 2019), which repre-

sents the first-level analysis and provides us with the par-

tial effects (i.e., the beta coefficients or “regression ERPs”;

Smith & Kutas, 2015a, 2015b) for each predictor of inter-

est. In a first step, both stimulus onset events and fixation

onset events were included as stick functions (also called

“finite impulse responses”) in the design matrix of the re-

gression model. To account for overlapping activity from

adjacent experimental events, the design matrix was then

time-expanded in a time window between −300 and

+800 msec around each stimulus and fixation onset

event. Time expansion means that the time points within

this window are added as predictors to the regression

model. Because the temporal distance between subse-

quent events in the experiment is variable, it is possible

to disentangle their overlapping responses. Time expan-

sion with stick functions is explained in Serences (2004)

and Ehinger and Dimigen (2019; see their Figure 2).

The model was run on EEG data sampled at the original

512 Hz; that is, no down-sampling was performed.

Using Wilkinson notation, the model formula for scene

onset events was defined as

ERP ∼ 1 þ Consistency

In this formula, the beta coefficients for the intercept (1)

capture the shape of the overall waveform of the stimulus

ERP in the consistent condition, which was used as the

reference level, whereas those for Consistency capture

the differential effect of presenting an inconsistent object

in the scene (relative to a consistent object) on the ERP.

The coefficients for the predictor Consistency are there-

fore analogous to a difference waveform in a traditional

ERP analysis (Smith & Kutas, 2015a, 2015b) and would

reveal if semantic processing already occurs immediately

after the initial presentation of the scene.

In the same regression model, we also included the

onsets of all fixations made on the scene. Fixation onsets

were modeled with the formula

FRP ∼ 1 þ Consistency * Type þ Sacc Amplitude

Thus, we predicted the FRP for each time point as a func-

tion of the semantic Consistency of the target object

(consistent vs. inconsistent; consistent as the reference

level) in interaction with the Type of fixation (critical

fixation vs. nontarget fixation; nontarget fixation as the

reference level). In this model, any FRP consistency

effects elicited by the pretarget or target fixation would

appear as an interaction between Consistency and

Fixation Type. In addition, we included the incoming

Saccade Amplitude (in degrees of visual angle) as a con-

tinuous linear covariate to control for the effect of sac-

cade size on the FRP waveform.2 Thus, the full model

was as follows:

fERP ∼1 þ Consistency;

FRP ∼ 1 þ Consistency * Type þ Sacc Amplitudeg

This regression model was then solved for the betas using

the LSMR algorithm in MATLAB (without regularization).

The deconvolution model specified by the formula

above was run twice: In one version, we treated the pre-

target fixation (t − 1) as the critical fixation; in the other

version, we treated the target fixation (t) as the critical

fixation. In a given model, all fixations but the critical

ones were defined as nontarget fixations. FRPs for fixa-

tion t − 1 and for fixation t were estimated in two sepa-

rate runs of the model, rather than simultaneously within

the same model, because the estimation of overlapping

activity was much more stable in this case. In other

words, although the deconvolution method allowed us

to control for much of the overlapping brain activity from

other fixations, we were not able to use the model to di-

rectly separate the (two) N400 consistency effects elicited

by the fixations t − 1 and t.3

Both runs of the model (the one for t − 1 and t) also

yield an estimate for the scene-onset ERP, but because

the results for the scene-onset ERP were virtually identi-

cal, we present the betas from the first run of the model.

The average number of events entering the model per

participant was 65.3 and 73.0 for scene onsets (consistent

and inconsistent conditions, respectively), 883.5 and 912.4

for nontarget fixations (nt), 59.8 and 61.8 for pretarget fix-

ations (t − 1), and 65.3 and 73.0 for target fixations (t).

578

Journal of Cognitive Neuroscience

Volume 32, Number 4

D

o

w

n

l

o

a

d

e

d

l

l

/

/

/

/

j

t

t

f

/

i

t

.

:

/

/

f

r

o

m

D

o

h

w

t

n

t

p

o

:

a

/

d

/

e

d

m

i

f

r

t

o

p

m

r

c

h

.

p

s

i

l

d

v

i

r

e

e

r

c

t

c

.

m

h

a

i

e

r

d

.

u

c

o

o

m

c

n

/

j

a

o

r

t

c

i

c

n

e

/

-

a

p

r

d

t

i

3

2

c

l

4

e

5

-

7

p

1

d

f

2

0

/

1

3

3

2

2

/

4

4

7

/

5

o

7

c

1

n

/

_

a

1

_

8

0

6

1

1

5

2

0

7

4

6

p

/

d

j

o

b

c

y

n

g

_

u

a

e

_

s

0

t

1

o

5

n

0

0

4

8

.

p

S

d

e

f

p

e

b

m

y

b

e

g

r

u

2

e

0

s

2

t

3

/

j

f

.

t

/

o

n

0

5

M

a

y

2

0

2

1

Baseline placement for FRPs. Another challenging issue

for free-viewing EEG experiments is the choice of an ap-

propriate neutral baseline interval for the FRP waveforms

(Nikolaev et al., 2016). Baseline placement is particularly

relevant for experiments on extrafoveal processing where

we do not know in advance when EEG differences will

arise and whether they may already develop before fixation

onset.

For the pretarget fixation t − 1 and nontarget fixations nt,

we used a standard baseline interval by subtracting the mean

channel voltages between −200 and 0 msec before the

event (note that the saccadic spike potential ramping up

at the end of this interval was almost completely removed

by our ICA procedure; see Supplementary Figure S1). For

fixation t, we cannot use such a baseline because semantic

processing may already be ongoing by the time the target

object is fixated. Thus, to apply a neutral baseline to

fixation t, we subtracted the mean channel voltages in

the 200-msec interval before the preceding fixation t − 1

also from the FRP aligned to the target fixations t (see

Nikolaev et al., 2016, for similar procedures). The scene-

onset ERP was corrected with a standard prestimulus

baseline (−200 to 0 msec).

Group statistics for EEG (second level of analysis). To

perform second-level group statistics, averaged EEG

waveforms at the single-participant level (“regression

ERPs”) were reconstructed from the beta coefficients of

the linear deconvolution model. These regression-based

ERPs are directly analogous to participant-level averages

in a traditional ERP analysis (Smith & Kutas, 2015a). We

then used two complementary statistical approaches to

examine consistency effect in the EEG at the group level:

linear mixed models and a cluster-based permutation test.

LMMs were

LMM in a priori defined time windows.

used to provide hypothesis-based testing motivated by exist-

ing literature. Specifically, we adopted the spatio-temporal

definitions by Võ and Wolfe (2013) and compared the con-

sistent and inconsistent conditions in the time windows

from 250 to 350 msec (early effect) and 350 to 600 msec

(late effect) at a midcentral ROI of nine electrodes (compris-

ing FC1, FCz, FC2, C1, Cz, C2, CP1, CPz, and CP2). Because

the outputs provided by the linear deconvolution model

(the first-level analysis) are already aggregated at the level

of participant averages, the only predictor included in these

LMMs was the Consistency of the object. Furthermore, to

minimize the risk of Type I error (Barr, Levy, Scheepers,

& Tily, 2013), we started with a random effects structure

with Participant as random intercept and slope for the

Consistency predictor. This random effects structure was

then evaluated and backwards-reduced using the step func-

tion of the lmerTest package (Kuznetsova, Brockhoff, &

Christensen, 2017) to retain the model that was justified

by the data; that is, it converged, and it was parsimonious

in the number of parameters (Matuschek, Kliegl, Vasishth,

Baayen, & Bates, 2017).

It is still largely unknown to

Cluster permutation tests.

what extent the topography of traditional ERP effects trans-

lates to natural viewing. Therefore, to test for consistency

effects across all channels and time points, we additionally

applied the Threshold-Free Cluster Enhancement (TFCE)

procedure developed by Smith and Nichols (2009) and

adapted to EEG data by Mensen and Khatami (2013;

http://github.com/Mensen/ept_TFCE-matlab). In a nutshell,

TFCE is a nonparametric permutation test that controls for

multiple comparisons across time and space, while maintain-

ing relatively high sensitivity (e.g., compared with a

Bonferroni correction). Its advantage over previous cluster

permutation tests (e.g., Maris & Oostenveld, 2007) is that

it does not require the experimenter to set an arbitrary

cluster-forming threshold. In the first stage of the TFCE pro-

cedure, a raw statistical measure (here, t values) is weighted

according to the support provided by clusters of similar

values at surrounding electrodes and time points. In the

second stage, these cluster-enhanced t values are then

compared with the maximum cluster-enhanced values ob-

served under the null hypotheses (based on n = 2000

random permutations of the data). In the present article

(Figures 4 and 5), we not only report the global result of

the test but also plot the spatio-temporal extent of the

first-stage clusters, because they provide some indication

about which time points and electrodes likely contributed

to the overall significant effect established by the test.

D

o

w

n

l

o

a

d

e

d

l

l

/

/

/

/

j

f

/

t

t

i

t

.

:

/

/

f

r

o

m

D

o

h

w

t

n

t

p

o

:

a

/

d

/

e

d

m

i

f

r

t

o

p

m

r

c

h

.

p

s

i

l

d

v

i

r

e

e

r

c

t

c

.

m

h

a

i

e

r

d

.

u

c

o

o

m

c

n

/

j

a

o

r

t

c

i

c

n

e

/

-

a

p

r

d

t

i

3

2

c

l

4

e

5

-

7

p

1

d

f

2

0

/

1

3

3

2

2

/

4

4

7

/

5

o

7

c

1

n

/

_

a

1

_

8

0

6

1

1

5

2

0

7

4

6

p

/

d

j

o

b

c

y

n

g

_

u

a

e

_

s

0

t

1

o

5

n

0

0

4

8

.

p

S

d

e

f

p

e

b

m

y

b

e

g

r

u

2

e

0

s

2

t

3

/

j

/

.

t

f

o

n

0

5

M

a

y

2

0

2

1

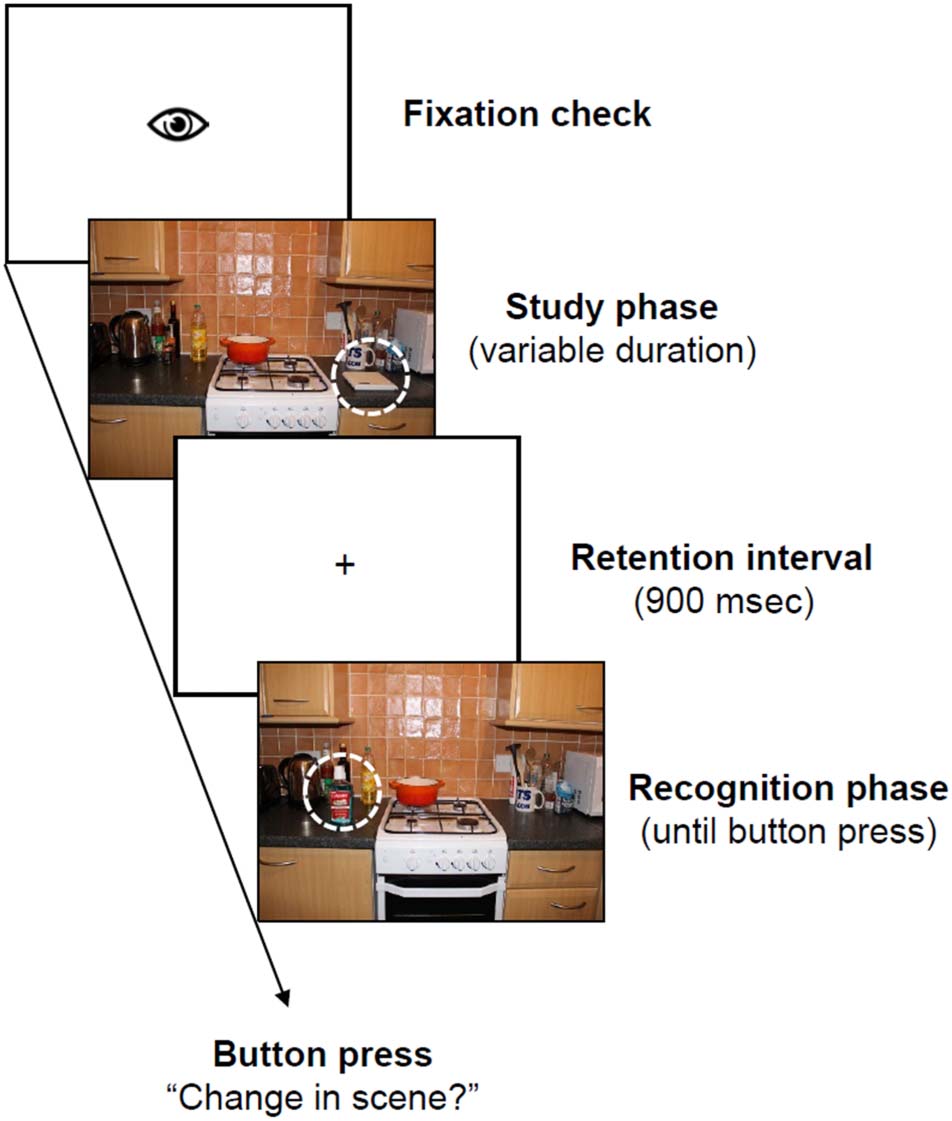

Figure 3. Eye-movement correlates of early overt attention toward

consistent and inconsistent critical objects. (A) Cumulative probability

of fixating the critical object as a function of the ordinal fixation number

on the scene. Blue solid line = consistent object; red dashed line =

inconsistent object. (B) Probability of fixating the critical object

immediately, that is, with the first fixation after scene onset. (C) Latency

until fixating the critical object for the first time. (D) First-pass gaze

duration for the critical object, that is, the sum of all fixation durations

from first entry to first exit. The size of the boxplots (B–D) represent

the 25th and 75th percentiles of the measure (lower and upper

quartiles). Dots indicate observations lying beyond the extremes of the

whiskers. Cons. = consistent; Incon. = inconsistent.

Coco, Nuthmann, and Dimigen

579

Table 2. Cumulative Probability of Having Fixated the Critical Object as a Function of the Ordinal Number of Fixations on the Scene

(Binomial Probit)

Predictor

Intercept

Nr. Fixation

Consistency

Consistency × Nr. Fixation

β

−1.04

−2.02

0.17

−0.72

Cumulative Probability of First Fixation

SE

0.02

0.06

0.03

0.09

z Value

−50.2

−35.5

5.9

−8.1

Pr (>|z|)

.00001

.00001

.00001

.00001

The centered predictors are Consistency (konsistent: −0.5, inconsistent: 0.5) and Number of Fixation (Nr. Fixation).

D

Ö

w

N

l

Ö

A

D

e

D

l

l

/

/

/

/

J

T

T

F

/

ich

T

.

:

/

/

F

R

Ö

M

D

Ö

H

w

T

N

T

P

Ö

:

A

/

D

/

e

D

M

ich

F

R

T

Ö

P

M

R

C

H

.

P

S

ich

l

D

v

ich

R

e

e

R

C

T

C

.

M

H

A

ich

e

R

D

.

u

C

Ö

Ö

M

C

N

/

J

A

Ö

R

T

C

ich

C

N

e

/

–

A

P

R

D

T

ich

3

2

C

l

4

e

5

–

7

P

1

D

F

2

0

/

1

3

3

2

2

/

4

4

7

/

5

Ö

7

C

1

N

/

_

A

1

_

8

0

6

1

1

5

2

0

7

4

6

P

/

D

J

Ö

B

C

j

N

G

_

u

A

e

_

S

0

T

1

Ö

5

N

0

0

4

8

.

P

S

D

e

F

P

e

B

M

j

B

e

G

R

u

2

e

0

S

2

T

3

/

J

.

/

T

F

Ö

N

0

5

M

A

j

2

0

2

1

Please note, Jedoch, that unlike the global test result,

these first-stage values are not stringently controlled for

false positives and do not establish precise effect onsets

or offsets (Sassenhagen & Draschkow, 2019). We report

them here as a descriptive statistic.

Endlich, for purely descriptive purposes and to provide a

priori information for future studies, we also plot the 95%

between-participant confidence interval for the consis-

tency effects at the central ROI (corresponding to sample-

by-sample paired t testing without correction for multiple

comparisons; see also Mudrik et al., 2014) in Figures 4

Und 5.

ERGEBNISSE

Task Performance (Change Detection Task)

After the recognition phase, participants pressed a button

to indicate whether or not a change had taken place within

the scene. Response accuracy in this task was high (M =

85.0 ± 5.16%) and did not differ as a function of whether

the study scene contained a consistent (84.6 ± 5.28%) oder

an inconsistent (85.3 ± 5.12%) target object.

Eye-movement Behavior

Figure 3A shows the cumulative probability of having fixat-

ed the target object as a function of the ordinal number of

fixation and semantic consistency, und Tisch 2 reports the

corresponding GLM coefficients. We found a significant

main effect of Consistency; overall, inconsistent objects

were looked at with a higher probability than consistent

Objekte. Wie erwartet, the cumulative probability of look-

ing at the critical object increased as a function of the

Ordinal Number of Fixation. There was also a significant

interaction between the two variables.

Complementing this global analysis, we analyzed the

very first eye movement during scene exploration to as-

sess whether observers had immediate extrafoveal access

to object–scene semantics (Loftus & Mackworth, 1978).

The mean probability of immediate object fixation was

12.93%; we observed a numeric advantage of inconsistent

objects over consistent objects (Abbildung 3B), but this differ-

ence was not significant (Tisch 3). The latency to first fix-

ation on the target object is another measure to capture

the potency of an object in attracting early attention in ex-

trafoveal vision (z.B., Võ & Henderson, 2009; Underwood

& Foulsham, 2006). This measure is defined as the time

elapsed between the onset of the scene image and the

first fixation on the critical object. Wichtig, this latency

was significantly shorter for inconsistent as compared with

consistent objects (Figure 3C, Tisch 3).

Darüber hinaus, we analyzed gaze duration as a measure of

foveal object processing time (z.B., Henderson et al.,

1999). First-pass gaze duration for a critical object is de-

fined as the sum of all fixation durations from first entry

to first exit. On average, participants looked longer at in-

konsistent (519 ms) than consistent (409 ms) Objekte

Tisch 3. Probability of Immediate Fixation, Latency to First Fixation, and Gaze Duration

Probability of Immediate Fixation

Latency to First Fixation

Gaze Duration

Predictor

Intercept

Consistency

β

−2.82

0.22

SE

0.18

0.16

z

−15.36***

1.38

β

1,774.4

−246.4

SE

77.2

64.0

T

β

SE

T

23.0***

455.5

36.55

23.33***

−3.85***

105.0

14.83

7.08***

The simple coded predictor is Consistency (consistent = −0.5, inconsistent = 0.5). We report the β, standard error, z value (for binomial link),

and t value. Asterisks indicate significant predictors.

***P < .001.

580

Journal of Cognitive Neuroscience

Volume 32, Number 4

D

o

w

n

l

o

a

d

e

d

l

l

/

/

/

/

j

t

t

f

/

i

t

.

:

/

/

f

r

o

m

D

o

h

w

t

n

t

p

o

:

a

/

d

/

e

d

m

i

f

r

t

o

p

m

r

c

h

.

p

s

i

l

d

v

i

r

e

e

r

c

t

c

.

m

h

a

i

e

r

d

.

u

c

o

o

m

c

n

/

j

a

o

r

t

c

i

c

n

e

/

-

a

p

r

d

t

i

3

2

c

l

4

e

5

-

7

p

1

d

f

2

0

/

1

3

3

2

2

/

4

4

7

/

5

o

7

c

1

n

/

_

a

1

_

8

0

6

1

1

5

2

0

7

4

6

p

/

d

j

o

b

c

y

n

g

_

u

a

e

_

s

0

t

1

o

5

n

0

0

4

8

.

p

S

d

e

f

p

e

b

m

y

b

e

g

r

u

2

e

0

s

2

t

3

/

j

/

t

.

f

o

n

0

5

M

a

y

2

0

2

1

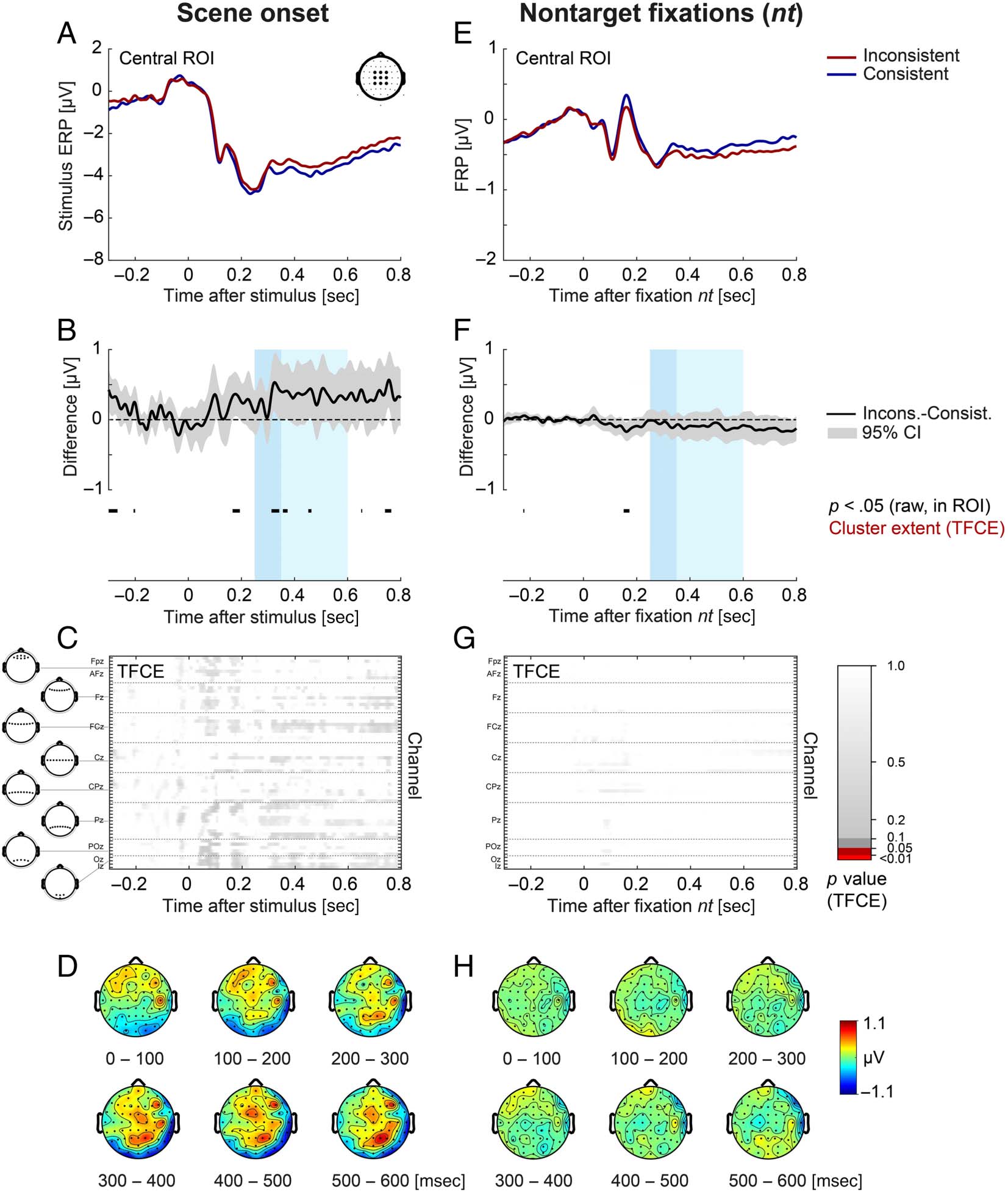

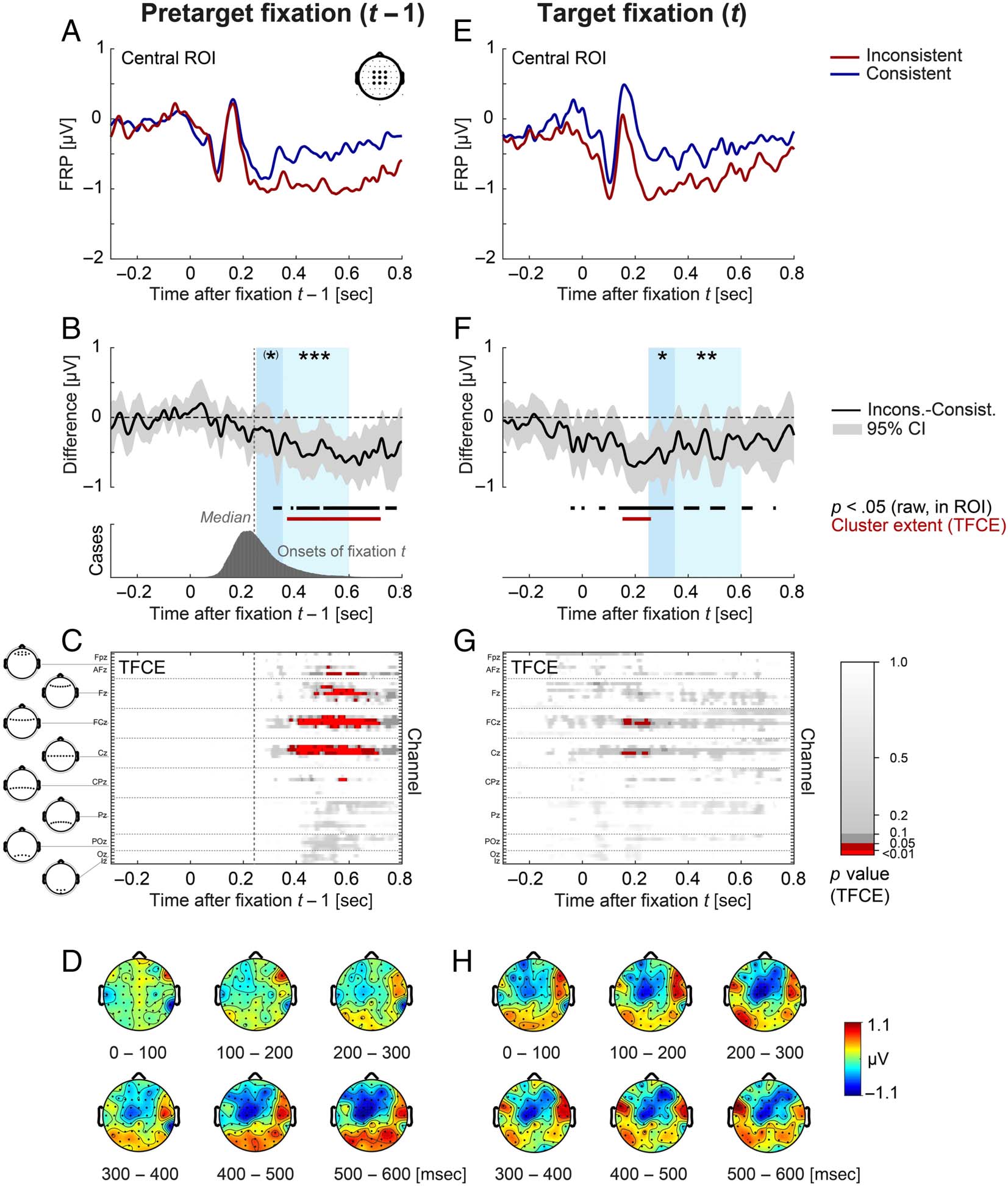

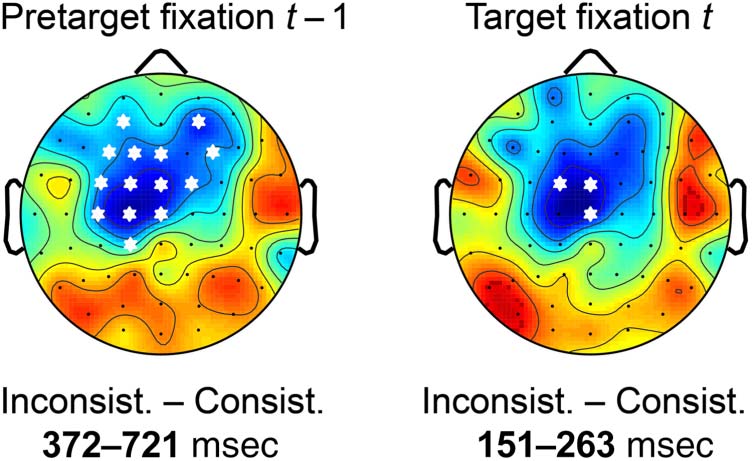

Figure 4. Stimulus ERP aligned to scene onset (left) and FRP aligned to nontarget fixations (right) as a function of object–scene consistency. (A, E)

Grand-averaged ERP/FRP at the central ROI (composed of electrodes FC1, FCz, FC2, C1, Cz, C2, CP1, CPz, and CP2). Red lines represent the

inconsistent condition, and blue lines represent the consistent condition. (B, F) Corresponding difference waves (inconsistent minus consistent) at

the central ROI. Gray shading illustrates the 95% confidence interval (without correction for multiple comparisons) of the difference wave, with

values outside the confidence interval also marked in black below the curve. The two windows used for LMM statistics (250–350 and 350–600 msec)